Neuromorphic computers – intended to mimic more directly how the human brain works – hold exciting promise but for the most part remain machines in development. Most simulations of brain neural networks are run on traditional HPC resources. These latter systems, of course, can struggle with such simulations and certainly can’t approach the power efficiency achieved by the human brain (~10-20 watts). A recent comparison study suggests one neuromorphic platform – SpiNNaker – is now able to perform a type of neural network simulation typically done on an HPC system and that this advance signals its suitability for broader use in research.

“SpiNNaker can support detailed biological models of the cortex – the outer layer of the brain that receives and processes information from the senses – delivering results very similar to those from an equivalent supercomputer software simulation,” said Sacha van Albada, an author of the study and leader of the Theoretical Neuroanatomy group at the Jülich Research Centre, Germany. “The ability to run large-scale detailed neural networks quickly and at low power consumption will advance robotics research and facilitate studies on learning and brain disorders.”

An account of the work (Breakthrough in construction of computers for mimicking human brain) was recently posted on the European Human Brain Project website. In this project, researchers used NEST, neural network simulation software widely used on HPC systems, as the reference. Details of the steps taken to port the NEST-based model to SpiNNaker are discussed in a paper published in Frontiers in Neuroscience.[i] The scale-up was enabled by recent developments in the SpiNNaker software stack that allow simulations to be spread across multiple boards. It’s the largest simulation yet run on SpiNNaker.

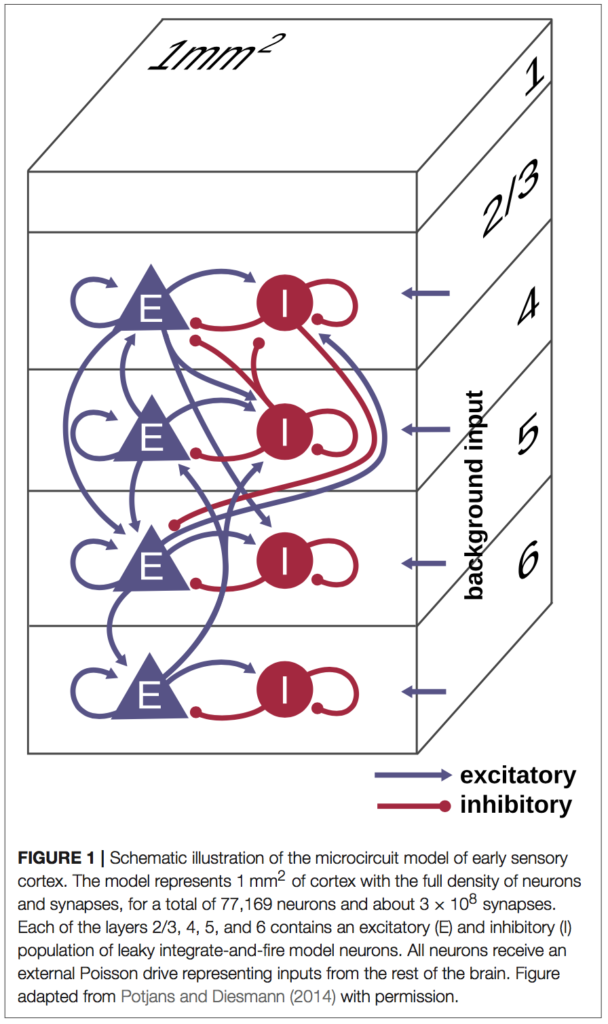

The researchers chose to model a so-called cortical microcircuit, which is regarded “as unit cell of cortex repeated to cover larger areas of cortical surface and different cortical areas. The model represents the full density of connectivity in 1 mm² of the cortical sheet by about 80,000 leaky integrate-and-fire (LIF) model neurons and 0.3 billion synapses. This is the smallest network size where a realistic number of synapses and a realistic connection probability are simultaneously achieved,” according to the paper.
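For readers unfamiliar with the neuron model, here is a minimal sketch of a single leaky integrate-and-fire neuron. It assumes a constant input current and uses the exact exponential update over each time step. The parameter defaults (membrane time constant, capacitance, threshold, reset, refractory period) are illustrative assumptions, not values taken from the paper. The function name `simulate_lif` is hypothetical, and the microcircuit's neurons are driven by synaptic input rather than a constant current.

```python
import math


def simulate_lif(i_ext_pA, t_sim_ms=1000.0, dt_ms=0.1,
                 tau_m_ms=10.0, c_m_pF=250.0, e_l_mV=-65.0,
                 v_th_mV=-50.0, v_reset_mV=-65.0, t_ref_ms=2.0):
    """Spike times (ms) of one LIF neuron driven by a constant input current.

    Parameter defaults are illustrative assumptions, not values from the paper.
    """
    r_m = tau_m_ms / c_m_pF               # membrane resistance: ms/pF == GOhm
    v_inf = e_l_mV + r_m * i_ext_pA       # steady-state voltage: GOhm * pA == mV
    decay = math.exp(-dt_ms / tau_m_ms)   # exact decay factor over one time step
    ref_steps = int(round(t_ref_ms / dt_ms))

    v = e_l_mV
    refractory_left = 0
    spikes = []
    for step in range(int(round(t_sim_ms / dt_ms))):
        if refractory_left > 0:           # voltage held at reset while refractory
            refractory_left -= 1
            continue
        # Exact solution of dV/dt = (E_L - V)/tau_m + I/C_m over one step (I constant).
        v = v_inf + (v - v_inf) * decay
        if v >= v_th_mV:                  # threshold crossing: record spike, reset
            spikes.append((step + 1) * dt_ms)
            v = v_reset_mV
            refractory_left = ref_steps
    return spikes


if __name__ == "__main__":
    for current in (300.0, 500.0):
        n_spikes = len(simulate_lif(current))  # spikes in 1 s of simulated time
        print(f"{current:.0f} pA -> {n_spikes} spikes/s")
```

With these assumed values, 300 pA leaves the neuron below threshold (its steady state is -53 mV), while 500 pA makes it fire regularly, at roughly 60 spikes per second.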

There are, of course, many approaches to building neuromorphic computers, all seeking to emulate the high performance and low power consumption of the human brain. One approach, pursued by the BrainScaleS project, is to literally etch neuron-like structures in silicon. Another uses traditional digital parts to create neuron-like circuits. This is the tack taken by SpiNNaker, which uses ARM9 cores and on-chip routers to implement a spiking neural network architecture (see SpiNNaker architecture). The SpiNNaker project is based at the University of Manchester, UK.
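SpiNNaker chips exchange spikes as small multicast packets identified by a routing key. Each chip's router looks the key up in a table of key/mask entries to decide which local cores and neighbouring chips receive the packet. The sketch below is a simplified model of that lookup, not SpiNNaker's software API. The key layout in `spike_key`, the destination names, and the error raised for unmatched packets from local cores are hypothetical choices made for illustration.

```python
from dataclasses import dataclass

# Each chip links to six neighbours; default routing sends an unmatched
# packet out of the link opposite the one it arrived on.
OPPOSITE = {"E": "W", "W": "E", "NE": "SW", "SW": "NE", "N": "S", "S": "N"}


@dataclass(frozen=True)
class RouteEntry:
    key: int              # value the masked packet key must equal
    mask: int             # which key bits take part in the comparison
    targets: frozenset    # destinations, e.g. {"core:3", "link:N"} (hypothetical names)


def route_packet(key, table, arrived_on=None):
    """Destinations for a multicast spike packet carrying a 32-bit key."""
    for entry in table:                       # ordered table: first match wins
        if (key & entry.mask) == entry.key:
            return set(entry.targets)
    if arrived_on is None:                    # simplification: local packets need a match
        raise LookupError(f"no route for key {key:#010x}")
    return {"link:" + OPPOSITE[arrived_on]}   # default routing: pass straight through


def spike_key(source_core, neuron_index):
    """Hypothetical key layout: source core ID above an 8-bit neuron index."""
    return (source_core << 8) | (neuron_index & 0xFF)


if __name__ == "__main__":
    table = [RouteEntry(key=0x00000100, mask=0xFFFFFF00,
                        targets=frozenset({"core:3", "link:N"}))]
    print(route_packet(spike_key(1, 5), table))                  # matched entry
    print(route_packet(spike_key(2, 5), table, arrived_on="W"))  # default route
```

The mask lets one table entry cover every neuron on a source core, so the table grows with the number of source cores rather than the number of neurons.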

“[T]he present work demonstrates the usability of SpiNNaker for large-scale neural network simulations with short neurobiological time scales and compares its performance in terms of accuracy, runtime, and power consumption with that of the simulation software NEST … The result constitutes a breakthrough: as the model already represents about half of the synapses impinging on the neurons, any larger cortical model will have only a limited increase in the number of synapses per neuron and can therefore be simulated by adding hardware resources,” write the researchers.

Getting the model, originally written for NEST, to run on SpiNNaker was part of the challenge. The network model was originally implemented in the native simulation language interpreter (SLI) of NEST. “To allow execution also on SpiNNaker and to unify the model description across back ends, we developed an alternative implementation in the simulator-independent language PyNN. On SpiNNaker, this works in conjunction with the sPyNNaker software,” they write.

Here’s a snapshot of the test platforms:

- HPC. NEST simulations are performed on a high-performance computing (HPC) cluster with 32 compute nodes. Each node is equipped with 2 Intel Xeon E5-2680v3 processors with a clock rate of 2.5 GHz, 128 GB RAM, 240 GB SSD local storage, and InfiniBand QDR (40 Gb/s). With 12 cores per processor and 2 hardware threads per core, the maximum number of threads per node using hyperthreading is 48. The cores can reduce and increase the clock rate (up to 3.3 GHz) in steps, depending on demand and thermal and power limits. Two Rack Power Distribution Units (PDUs) from Raritan (PX3-5530V) are used for power measurements. The HPC cluster uses the operating system CentOS 7.1 with Linux kernel 3.10.0. For memory allocation, we use jemalloc 4.1.0 in this study.

- SpiNNaker. The SpiNNaker simulations are performed using the 4.0.0 release of the software stack. The microcircuit model is simulated on a machine consisting of 6 SpiNN-5 SpiNNaker boards, using a total of 217 chips and 1934 ARM9 cores. Each board consists of 48 chips and each chip of 18 cores, resulting in a total of 288 chips and 5184 cores available for use. Of these, two cores on each chip are used for loading, retrieving results and simulation control. Of the remaining cores, only 1934 are used, as this is all that is required to simulate the number of neurons in the network with 80 neurons on each of the neuron cores.
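The resource counts in the platform list can be checked with a few lines of arithmetic. This sketch uses only figures from the list: 48 chips per board, 18 cores per chip, two control cores per chip, 80 neurons per neuron core, and the HPC node layout. The function names are hypothetical. `min_neuron_cores` counts only cores that host neurons. For about 80,000 neurons it gives 1,000, which is below the 1,934 cores actually used. The article does not itemize the remaining cores, which presumably serve other roles in the simulation.

```python
import math

CHIPS_PER_BOARD = 48
CORES_PER_CHIP = 18
CONTROL_CORES_PER_CHIP = 2     # loading, result retrieval, simulation control
NEURONS_PER_NEURON_CORE = 80   # the lowest setting available at the time


def spinnaker_capacity(boards):
    """(chips, total cores, cores left for the simulation) for SpiNN-5 boards."""
    chips = boards * CHIPS_PER_BOARD
    total_cores = chips * CORES_PER_CHIP
    usable_cores = chips * (CORES_PER_CHIP - CONTROL_CORES_PER_CHIP)
    return chips, total_cores, usable_cores


def min_neuron_cores(n_neurons, per_core=NEURONS_PER_NEURON_CORE):
    """Lower bound on cores hosting neurons; other core roles are not counted."""
    return math.ceil(n_neurons / per_core)


def hpc_threads(nodes, sockets=2, cores_per_socket=12, threads_per_core=2):
    """Hardware threads on the NEST cluster in the test-platform list."""
    return nodes * sockets * cores_per_socket * threads_per_core


if __name__ == "__main__":
    print(spinnaker_capacity(6))            # (288, 5184, 4608)
    print(min_neuron_cores(80_000))         # 1000
    print(hpc_threads(1), hpc_threads(32))  # 48 1536
```

With the number of neurons per core fixed, the neuron-core count grows linearly with model size. This illustrates the researchers' point that a larger model can be accommodated mainly by adding boards.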

“It is presently unclear which computer architecture is best suited to study whole-brain networks efficiently. The European Human Brain Project and Jülich Research Centre have performed extensive research to identify the best strategy for this highly complex problem. Today’s supercomputers require several minutes to simulate one second of real time, so studies on processes like learning, which take hours and days in real time, are currently out of reach,” said Markus Diesmann, a co-author of the paper and head of the Computational and Systems Neuroscience department at the Jülich Research Centre, quoted in the HBP article.

“There is a huge gap between the energy consumption of the brain and today’s supercomputers. Neuromorphic (brain-inspired) computing allows us to investigate how close we can get to the energy efficiency of the brain using electronics,” said Diesmann.

As always, the devil is in the details, and those are best gleaned from the paper. Simulation timing adjustments, for example, were needed. SpiNNaker achieves real-time performance for an integration time step of 1 ms, which “generally suffices” for applications in robotics and artificial neural networks, but a “time step of 0.1ms” is typical for neuroscience applications.

The researchers are already looking ahead:

“As a consequence of the combination of required computation step size and large numbers of inputs, the simulation has to be slowed down compared to real time. In future, we will investigate the possibility of adding support for real-time performance with 0.1ms time steps. Reducing the number of neurons to be processed on each core, which we presently cannot set to fewer than 80, may contribute to faster simulation. More advanced software concepts using a synapse-centric approach open a new route for future work.”
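The researchers' explanation can be illustrated with a toy per-core cost model. They attribute the slowdown to a small step size combined with many inputs, and suggest that fewer neurons per core may help. In this sketch the per-neuron update cost and the per-synaptic-event cost are hypothetical parameters, not measured SpiNNaker figures. The model also ignores communication and load imbalance. Only the synapses-per-neuron figure comes from the article: 0.3 billion synapses / 80,000 neurons = 3,750.

```python
def core_load(dt_ms, neurons_per_core, synapses_per_neuron, mean_rate_hz,
              t_update_us, t_event_us):
    """Seconds of compute one core needs per second of biological time (toy model).

    t_update_us: hypothetical cost to update one neuron for one time step.
    t_event_us:  hypothetical cost to process one incoming synaptic event.
    """
    steps_per_s = 1000.0 / dt_ms                  # time steps per biological second
    update_us = steps_per_s * neurons_per_core * t_update_us
    # Incoming events do not depend on dt: each spike is delivered once per synapse.
    events_per_s = neurons_per_core * synapses_per_neuron * mean_rate_hz
    return (update_us + events_per_s * t_event_us) / 1e6


def slowdown(load):
    """Real time is the fastest mode assumed here, so clamp the factor at 1."""
    return max(1.0, load)


if __name__ == "__main__":
    synapses_per_neuron = 0.3e9 / 80_000          # from the article: 3,750
    for dt in (1.0, 0.1):
        for per_core in (80, 40):
            load = core_load(dt, per_core, synapses_per_neuron, mean_rate_hz=5.0,
                             t_update_us=0.2, t_event_us=0.1)  # hypothetical costs
            print(f"dt={dt} ms, {per_core} neurons/core -> slowdown {slowdown(load):.2f}")
```

In this toy, shrinking the step from 1 ms to 0.1 ms multiplies only the neuron-update term by ten. Halving the number of neurons per core halves both terms. That matches the direction, though not necessarily the magnitude, of the effects the researchers describe.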

Link to article: https://www.humanbrainproject.eu/en/follow-hbp/news/breakthrough-in-construction-of-computers-for-mimicking-human-brain/

Link to paper: https://www.frontiersin.org/articles/10.3389/fnins.2018.00291/full

[i] Performance Comparison of the Digital Neuromorphic Hardware SpiNNaker and the Neural Network Simulation Software NEST for a Full-Scale Cortical Microcircuit Model. Frontiers in Neuroscience. https://www.frontiersin.org/articles/10.3389/fnins.2018.00291/full