Sept. 20, 2023 — In 2023, the Association for Computing Machinery will present its first-ever ACM Gordon Bell Prize for Climate Modelling during a special ceremony at SC23 this November in Denver. The award, which will be given annually for the next 10 years, aims to recognize the contributions of climate scientists and software engineers in addressing climate change. Award namesake Gordon Bell, a pioneer in high-performance and parallel computing, is providing the $10,000 award.

Specifically, the award will spotlight innovative parallel computing contributions, judged on the performance and innovation of their computational methods and on their contributions toward improving climate modeling and understanding of the Earth’s climate system.

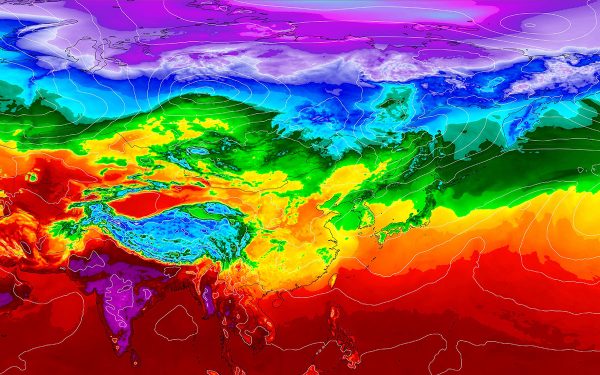

Today, climate modeling provides the most viable window to anticipate, and potentially reduce, the future impacts of climate change driven by increasing atmospheric greenhouse gas levels. By recognizing the scientific and computing contributions required to tackle worldwide climate change and respond to the need for more accurate, rapidly available climate information, the Gordon Bell Prize for Climate Modelling can be a means to elevate awareness about the consequences of climate change while showcasing the pioneering science and dedicated researchers working on this Grand Challenge problem.

Finalists for the award are selected based on their work’s actual or potential influence on climate modeling, its related disciplines, and its broader societal impact. The use of high-performance computing (HPC) in the climate modeling application is also considered. Based on these criteria, the ACM Gordon Bell Prize for Climate Modelling Award Committee selected three finalists for the inaugural award.

FINALIST 1

The Simple Cloud-Resolving E3SM Atmosphere Model Running on the Frontier Exascale System

Authors: Mark Taylor, Peter M. Caldwell, Luca Bertagna, Conrad Clevenger, Aaron S. Donahue, James G. Foucar, Oksana Guba, Benjamin R. Hillman, Noel Keen, Jayesh Krishna, Matthew R. Norman, Sarat Sreepathi, Christopher R. Terai, James B. White III, Danqing Wu, Andrew G. Salinger, Renata B. McCoy, L. Ruby Leung, and David C. Bader

This work introduces an efficient and performance-portable implementation of the Simple Cloud-Resolving E3SM Atmosphere Model (SCREAM). SCREAM is a full-featured atmospheric global circulation cloud-resolving model. A significant advance is that SCREAM was written from scratch in C++ and uses the Kokkos library to abstract the on-node execution model for both CPUs and GPUs. To date, only a few global atmosphere models have been ported to GPUs. SCREAM ran on both AMD and NVIDIA GPUs and on nearly an entire exascale system (Frontier). On Frontier, it achieved groundbreaking performance, simulating 1.26 years per day for a practical cloud-resolving simulation. This is a pivotal stride in climate modeling, offering improved and much-needed predictions of the potential outcomes of future climate change.
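To put the 1.26 simulated-years-per-day figure in perspective, the short sketch below converts it into a speedup over real time and into the wall-clock time needed for a longer run. The 365-day calendar, the 10-year run length, and the function names are illustrative assumptions, not details from the SCREAM paper.

```python
# Illustrative arithmetic only; names and the 10-year example are assumptions.
DAYS_PER_YEAR = 365.0  # assumption: a 365-day model calendar


def speedup_over_real_time(sypd: float) -> float:
    """Simulated days advanced per wall-clock day."""
    return sypd * DAYS_PER_YEAR


def wall_clock_days(simulated_years: float, sypd: float) -> float:
    """Wall-clock days needed to simulate the given number of years."""
    return simulated_years / sypd


sypd = 1.26  # throughput reported for SCREAM on Frontier
print(round(speedup_over_real_time(sypd)))   # 460
print(round(wall_clock_days(10.0, sypd), 1))  # 7.9
```

At this rate the model advances roughly 460 times faster than real time, so a hypothetical decade-long simulation would take about eight days of machine time.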

FINALIST 2

Establishing a Modeling System in 3-km Horizontal Resolution for Global Atmospheric Circulation Triggered by Submarine Volcanic Eruptions with 400 Billion Smoothed Particle Hydrodynamics

Authors: Shenghong Huang, Junshi Chen, Ziyu Zhang, Xiaoyu Hao, Jun Gu, Hong An, Chun Zhao, Yan Hu, Zhanming Wang, Longkui Chen, Yifan Luo, Jineng Yao, Yi ZhangSong, Yang Zhao, Zhihao Wang, Dongning Jia, Zhao Jin, Changming Song, Xisheng Luo, Xiaobin He, and Dexun Chen

This work presents a comprehensive simulation that captures the entire dynamic sequence of the Tonga eruption, from the initial shock waves, earthquakes, and tsunamis to the subsequent development of mushroom clouds over the following 6-7 days. The simulation accounts for the dispersion and diffusion of ash and water vapor during that period. The modeling system resolved the effects of the full coupling of the volcanic eruption, the resulting seismic activity, and concurrent oceanic and atmospheric phenomena. In their paper, the team describes a novel modeling system designed for volcanic eruptions and atmospheric circulation. The system was run on a new Sunway supercomputer in China at spatial resolutions ranging from 10 meters at the local scale to 3 kilometers globally. The method combines an enhanced multimedium, multiphase smoothed particle hydrodynamics (SPH) model with a fully coupled meteorology-chemistry global atmospheric modeling scheme. The system simulated 400 billion particles at 80% parallel efficiency on 39 million processor cores. This marks a breakthrough in the ability to study interactions between tectonic processes and climate change. Furthermore, it provides the means to develop an early-warning simulation system capable of addressing similar global hazard events in the future.

FINALIST 3

Big Data Assimilation: Real-time 30-second-refresh Heavy Rain Forecast Using Fugaku During Tokyo Olympics and Paralympics

Authors: Takemasa Miyoshi, Arata Amemiya, Shigenori Otsuka, Yasumitsu Maejima, James Taylor, Takumi Honda, Hirofumi Tomita, Seiya Nishizawa, Kenta Sueki, Tsuyoshi Yamaura, Yutaka Ishikawa, Shinsuke Satoh, Tomoo Ushio, Kana Koike, and Atsuya Uno

The work presents a real-time 30-second-refresh numerical weather prediction (NWP) system, run with exclusive use of 11,580 nodes (approximately 7% of the total) of the Fugaku supercomputer during the 2021 Tokyo Olympics and Paralympics. A total of 75,248 forecasts were disseminated in the one-month period, with a time-to-solution of less than three minutes for each 30-minute forecast. Japan’s Big Data Assimilation (BDA) project developed the novel NWP system for precise prediction of hazardous rains as a step toward solving the global climate crisis, in which hazardous rain events are likely to increase with global warming. To achieve the required time-to-solution for a real-time 30-second refresh with high accuracy, the core BDA software incorporates single precision and enhanced parallel input/output (I/O) with tailored configurations involving 1,000 ensemble members of a 500-meter resolution weather model. Compared to the conventional one-hour refresh systems used by weather bureaus, the BDA system not only showcased a two-orders-of-magnitude increase in problem size, but also revealed the effectiveness of a 30-second refresh for highly nonlinear and rapidly evolving convective rainstorms. This endeavor stands as a testament to the value of applying advanced computational methods to advance understanding of intricate meteorological phenomena.
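The timing figures above imply some simple throughput arithmetic, sketched below. It assumes a new forecast is launched every 30 seconds and that each takes up to the quoted three minutes; the article does not describe how the BDA system actually schedules its runs, so the function names and the overlap model are illustrative assumptions.

```python
import math

# Figures quoted in the article.
REFRESH_S = 30                     # a new forecast every 30 seconds
MAX_TIME_TO_SOLUTION_S = 3 * 60    # each forecast finishes in under 3 minutes
FORECAST_LENGTH_S = 30 * 60        # each forecast covers 30 minutes
FORECASTS_ISSUED = 75_248


def max_forecasts_in_flight(time_to_solution_s: float, refresh_s: float) -> int:
    """Upper bound on overlapping forecasts (assumes one launch per refresh)."""
    return math.ceil(time_to_solution_s / refresh_s)


def continuous_operation_days(n_forecasts: int, refresh_s: float) -> float:
    """Days of back-to-back operation needed to issue n_forecasts."""
    return n_forecasts * refresh_s / 86_400


print(max_forecasts_in_flight(MAX_TIME_TO_SOLUTION_S, REFRESH_S))      # 6
print(FORECAST_LENGTH_S / MAX_TIME_TO_SOLUTION_S)                      # 10.0
print(round(continuous_operation_days(FORECASTS_ISSUED, REFRESH_S), 1))  # 26.1
```

Under these assumptions, up to six forecasts overlap at any moment, each runs at least ten times faster than real time, and 75,248 forecasts correspond to about 26 days of continuous operation, which is consistent with the one-month period cited.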

Source: John Taylor, SC23