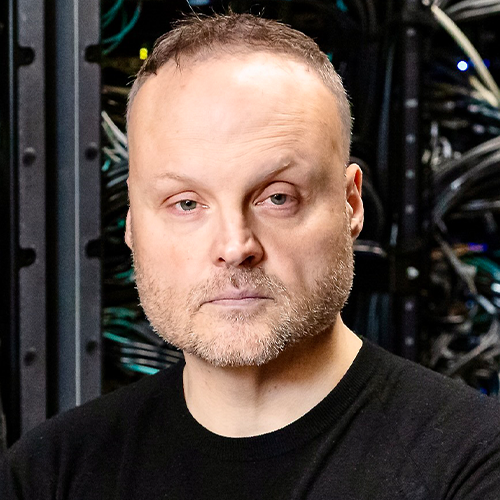

Sven Breuner

International Field CTO, VAST Data

Congratulations on being named a 2022 HPCwire Person to Watch! What is at the top of your professional to-do list for 2022? What are your goals for VAST Data?

Thank you for this honor!

Without a doubt, this will be a year of many interesting new developments. AI will of course be a major part of what makes the year interesting, as we have only seen the tip of the iceberg of possibilities so far. But applying small-scale AI experiments to much bigger production datasets is still a process that can easily fail if the infrastructure is not carefully prepared for this. Working closely with customers on understanding their ideas, finding out what it takes to transition these ideas into production, and then actually seeing the plan come together in the end is the part that I enjoy most. It’s also the part where my HPC background helps a lot.

When I joined VAST Data about a year ago, it was already selected for many large-scale deployments as the most suitable storage solution for AI, based on the fact that it made all-flash affordable at multi-petabyte scale, while providing access through standard file and object protocols. But in my opinion, it’s also the team’s willingness to work closely with the customers and to understand their full workflow that makes VAST a trusted solution for large-scale deployments. Thus, maintaining this spirit as we continue to significantly grow the field team this year is one of my main goals for VAST.

What do the next 12 months have in store for VAST?

We just closed the third year of sales at a run rate of nearly $300 million, which fortunately gives us the full freedom and capacity to continue implementing the vision that we built based on customer feedback and predictable market trends – because we’re really only just getting started.

There will be announcements with more details throughout the year, but as a sneak preview: From the uniquely scalable file and object storage platform that we initially built, it’s now time to start moving up the stack by integrating more layers around database-like features and around seamlessly connecting geo-distributed datacenters.

Also, in the context of NVIDIA’s March GTC conference, we recently announced a new hardware platform which we named “Ceres”. One aspect that makes this platform interesting is that it’s built around NVIDIA’s BlueField SmartNICs instead of classic server CPUs.

How have HPC storage needs changed over time? What are the current challenges?

When I joined the Fraunhofer Center for HPC back in 2005, where I designed BeeGFS, I started by interviewing many of my colleagues to find out what they expected from a new parallel file system. Based on that, I can say that the essential user demands have been the same all the time: Fast access to large and small files, sequential and random. And while I still found a lot of room for improvement on the file system software side, the fundamental problem was just that the software was always bound by the capabilities of spinning disks, which are just inherently slow for small non-sequential accesses.

Consequently, the users had to spend a lot of time and effort on trying to rewrite their applications into doing less random and less concurrent storage access. This was necessary to improve the application runtime with spinning disks, but actually it severely limited the possibilities to evolve HPC applications through new algorithms.

Interestingly, this somehow seems to have created the impression over the years that HPC is primarily about streaming I/O, but that’s not the case. Some very significant advancements in HPC become possible when the file systems are no longer limited by spinning disks and when the users understand that this not only makes their current workload run faster, but finally enables them to no longer worry about random access patterns.

You created elbencho in 2020 as a modern HPC and AI storage benchmark. What needs does this benchmark meet and how is the project going?

Elbencho is essentially a multi-protocol storage benchmark tool, which can be used to test block devices, file systems and object stores, either from a single client or coordinated from multiple clients. It was also the first open-source tool to support NVIDIA’s GPUDirect Storage access method to more efficiently load data into GPU memory.

The latter is what makes it so interesting for AI and especially Deep Learning storage performance tests. Its shared file system testing capabilities make it suitable to test HPC storage systems. And its S3 object storage support has helped people evaluate modern alternatives for their aging Hadoop-based big data analytics infrastructures.

With all this together, I hope that it’s fair to call it a Swiss Army knife for storage benchmarking, which contains contributions from several other people and which I still keep extending continuously with new capabilities.

As a side note: I sometimes get asked by HPC community members how elbencho compares to io500. While io500 is a suite of predefined test cases (including tests for the creation and deletion of lots of empty files, which I think many people are not aware of and which I think is of limited practical relevance), my goal for elbencho was to let the user decide which test cases are relevant instead of predefining test cases.

Are you still involved in BeeGFS?

One day job is enough for me, so I’m exclusively with VAST and no longer involved in BeeGFS nowadays. But as the creator of BeeGFS, I’m of course glad to see its widespread use and was also happy to see that it has won several HPCwire awards over the last few years.

Where do you see HPC headed? What trends – and in particular emerging trends – do you find most notable? Any areas you are concerned about, or identify as in need of more attention/investment?

Every now and then I see statements popping up about more specialized systems being the key to getting HPC efficiency to the next level, which concerns me. While it’s certainly true that well-defined workloads can benefit from specialized hardware, I think that especially in scientific HPC it is more appropriate to provide an infrastructure that works well without a lot of fine tuning.

Ultimately, the researchers’ ability to quickly test different approaches (e.g. variants of an algorithm) would be severely limited if each new variant required a lot of fine-tuning for the particular platform. Thus, in my opinion any new HPC technology should always be evaluated under the aspect of how flexible the users will be and how easily they can take advantage of it.

I am biased towards storage, where the industry fortunately brought up flash as a technology that is very forgiving of non-optimal access patterns and thus makes it easy for researchers to experiment. But I hope that the scientists can benefit from similar flexibility at all levels of the HPC stack in the future.

What inspired you to pursue a career in STEM and what advice would you give to young people wishing to follow in your footsteps?

Just do what makes you happy and don’t be afraid to make mistakes, because mistakes are part of the fun 🙂

Outside of the professional sphere, what can you tell us about yourself – unique hobbies, favorite places, etc.? Is there anything about you your colleagues might be surprised to learn?

For the first 15 years of my life, I grew up on the small German island “Borkum” in the North Sea. Maybe that’s the reason for my wanderlust. I love to explore different parts of the world, especially the seaside for snorkeling, so Australia and the South Pacific islands are great places for my wife and me. As a child I also learned horseback riding, which I still enjoy today as another great way to explore new territories.