Announced earlier this year, the pan-European cloud computing project, Helix Nebula – the Science Cloud, brings together select IT service providers with three leading research institutions, CERN, the European Space Agency (ESA), and the European Molecular Biology Laboratory (EMBL). The two-year pilot phase currently underway supports the exciting Higgs boson search (discovery!), as well as molecular biology and earth observation science. In preparation for their upcoming keynote at the ISC Cloud conference, set to take place this September in Mannheim, Germany, representatives from all three centers share their perspectives on the initiative and provide an outline of what’s to come.

On September 24 – 25, 2012, cloud computing experts and end users from around the world will gather at the ISC Cloud’12 Conference at the Dorint Hotel in Mannheim, Germany. The event will focus on compute-intensive and big data applications, their resource needs in the cloud, and strategies for implementing and deploying cloud infrastructures.

ISC Cloud Conference Chairman Wolfgang Gentzsch spoke with the Keynote Session presenters Bob Jones from CERN in Geneva, Rupert Lueck from EMBL in Heidelberg, and Wolfgang Lengert from ESA in Rome, about their joint keynote topic, the Helix Nebula Science Cloud.

HPC in the Cloud: For more than a decade, CERN has been continuously developing and refining its distributed IT infrastructure for evaluating the events from the Large Hadron Collider particle physics experiment, known as the Worldwide LHC Computing Grid. Now CERN has co-initiated Helix Nebula – the Science Cloud. How is this different from previous CERN infrastructures?

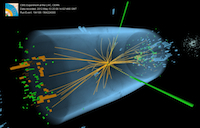

Bob Jones: The very successful Worldwide LHC Computing Grid, which has been essential to the LHC experiments’ work to observe a particle consistent with the long-sought Higgs boson (see recent CERN press release), consists of publicly-managed data centres. Helix Nebula is a public-private partnership in which public research organisations, such as CERN, run their scientific applications on commercially-managed cloud services.

HPC in the Cloud: How will ESA benefit from the Helix Nebula infrastructure?

Wolfgang Lengert: This infrastructure will be used as a science exploitation platform, initially focusing on earthquake and volcano research in support of GEO (http://www.earthobservations.org) in the social benefit area of natural disasters. Hosted on the Helix Nebula infrastructure, the platform will provide easy access to massive data (initially mainly ESA EO data, but also in-situ data), tools (open source and commercial), models, and a collaboration environment, allowing the fullest possible exploitation of ESA space data and other data to advance research in a cross-disciplinary fashion. It will enhance the exploitation of ESA’s historical ERS and Envisat missions, whose continuous 20-year data set covers the oceans, land, cryosphere and atmosphere. Because of the large data volume, the processing capacity and tools need to sit right next to the data.

HPC in the Cloud: How will EMBL benefit from the Helix Nebula infrastructure?

Rupert Lueck: EMBL builds on Helix Nebula to develop a portal for cloud-supported large-scale genome analysis. The analysis of large and complex genomes will allow a deeper insight into evolution and biodiversity across a range of organisms. However, it involves vast amounts of sequence data, which scientists can generate within a few days. This poses a challenge for many labs, as data management and analysis require powerful high performance IT infrastructures as well as bioinformatics expertise. EMBL’s novel cloud-based whole genome assembly and annotation pipeline draws on key knowledge from data production, bioinformatics and IT teams at EMBL Headquarters in Heidelberg and EMBL’s European Bioinformatics Institute in Hinxton, UK. It will allow scientists, at EMBL and around the world, to meet the data challenge by providing the right infrastructure on demand.

Using Helix Nebula, EMBL also wants to explore how the benefits of cloud computing can be applied to other key research technologies such as high-throughput microscopy, which poses yet another big data challenge for the institute.

Beyond basic research, EMBL’s large-scale genomic analysis platform could certainly attract, and might be further adapted to, research in the medical field or the pharma and agricultural industries. The cloud computing model provides the power and scalability to make this service available to the widest possible user base.

HPC in the Cloud: What are the roles of other partners in the project?

Wolfgang Lengert: The other partners include commercial cloud service providers, which are assembling the infrastructure, and SMEs that specialise in developing and integrating cloud-based services.

HPC in the Cloud: Is Helix Nebula an infrastructure for research, or will industry participate in some way?

Rupert Lueck: The infrastructure being assembled for Helix Nebula is owned and operated by industry.

HPC in the Cloud: Where do you anticipate Helix Nebula will be five years from now?

Bob Jones: Assuming the pilot phase is successful, we expect Helix Nebula to grow to include more commercial cloud services providers and public organisations as consumers. The vision and the goals are outlined in the strategic plan [PDF].

More information about the event where Bob, Rupert and Wolfgang are set to discuss these topics in more detail can be found at the ISC Cloud ’12 website.