As we get ready to launch the newest member of the Intel Xeon Phi family, code named Knights Landing, it is natural that there be some questions and potentially some confusion.

I have found that everything becomes clear once we really understand that Knights Landing is an Intel processor. That makes it NOT Knights Corner. That makes it NOT a GPU. That makes it NOT a PCIe-limited accelerator. And it does NOT force large, new, and unique investments in software programming.

Perhaps everything is most clear when we discuss what it is and what it is not.

Knights Landing is NOT Knights Corner

Knights Corner, the first Intel Xeon Phi product, was a coprocessor. Knights Corner has been extraordinarily successful, powering many of the world’s fastest computers. Nevertheless, Knights Corner required a host processor, shuffling of data over the PCIe bus, and often the use of offload-style programming due to limited memory capacity and strong Amdahl’s Law effects when running less parallel code.

Knights Landing, as a processor, is a very easy upgrade for a Knights Corner user. Applications that used Knights Corner run even better on Knights Landing. Even bigger news is this: applications which were never able to adapt to the limitations of Knights Corner (or offloading to GPUs for that matter) will find Knights Landing an exciting option.

Knights Landing is NOT a GPU (neither was Knights Corner)

Knights Landing is a full-fledged, highly scalable, Intel processor. This processor can reach unprecedented levels of performance and parallelism, without giving up programmability. You can use the same parallel programming models, the same tools, and the same binaries that run today on other Intel processors.

Programming languages that work for processors just work for Knights Landing too. Programming models such as OpenMP, MPI, and TBB just work for Knights Landing as well.

Restrictive models tailored for GPUs, including kernel programming in CUDA and OpenCL, do not apply to processors (and I’m not talking just about Intel processors). We do not need them, because we have the full richness and portability of processor programming models fully available on Knights Landing.

Knights Landing is NOT going to invalidate prior processor coding efforts

It’s Knights Landing that really brings us home. It’s a full processor from Intel, one that happens to have up to 72 cores. It has an unprecedented ability to perform on highly parallel programs while being compatible with the tools and programming models common to Intel processors.

One of the first things I did when I initially logged on to a Knights Landing machine was to type in “yum install emacs.” I’m sure that whoever built that emacs binary had never heard of Knights Landing. It worked, and I was happy to have the power of emacs so as to not be slowed by the primitive “vi.” I am so happy that software just runs, with no recompilation needed. There is no need to do something weird with Knights Landing to use it with your favorite software. It’s just like any other processor from Intel in that respect! It can run anything you would expect a processor to run: C, C++, Fortran, Python, and much more. It really is a full processor!

We think that parallel programming is challenging enough. That’s why we took a different approach compared to other device designs – especially GPUs. Our goal has been to deliver never before attainable processor performance while remaining compatible with existing software and tools. It’s quite an accomplishment.

Knights Landing is NOT inflexible

When we are considering the design for a new computer, we ask a variety of basic questions, consider options, make choices, and bake a set of choices into a design. In the past, when the topic of using high bandwidth memory came up, there was always a debate: should we make it a cache or should we make it a scratchpad memory? And that, of course, depends to a certain extent on whether your application is cache-friendly – and most are – or if it’s one of those apps that is not cache-friendly and you think you can do better with scratchpad memory. Previously, we generally had to design the computer choosing one approach or the other and then live with the decision. With Knights Landing, we offer choices which make Knights Landing amazingly versatile.

Knights Landing integrates high bandwidth memory known as MCDRAM, which greatly enhances performance.

Unprecedented configurability allows it to be operated in different “memory modes.” MCDRAM can be treated as a high bandwidth memory-side cache, exposed as directly addressable high bandwidth memory, or split so that it provides a little of each. Knights Landing also supports different “cluster modes,” allowing it to behave as a cluster with one, two, or four NUMA domains.

As Jim Jeffers and I say in our book on Knights Landing, “Knights Landing offers an unprecedented variety of configurations which have traditionally been available only as hardwired and unchangeable design decisions. Specifically, the choices realized by the cluster modes and the memory modes. This wide ranging support allows Knights Landing to act like very different machines based on the configuration used to initialize the CPU, the operating system, and then the applications.” This means that Knights Landing can be adapted to fit application needs.
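The memory and cluster modes described above can be pictured with a small model. The Python sketch below is illustrative only. The 16 GB MCDRAM capacity, the mode names `cache`, `flat`, and `hybrid`, the 50% default hybrid split, and the rule that addressable MCDRAM shows up as one extra NUMA node per domain are all assumptions that this article does not state. The function names are hypothetical.

```python
# Illustrative model only: capacity, mode names, and the NUMA rule are
# assumptions for this sketch, not figures taken from the article.

MCDRAM_GB = 16  # assumed on-package capacity


def split_mcdram(memory_mode, total_gb=MCDRAM_GB, hybrid_cache_fraction=0.5):
    """Return (cache_gb, addressable_gb) for a hypothetical memory-mode name."""
    if memory_mode == "cache":
        # All MCDRAM acts as a memory-side cache in front of DDR.
        return (total_gb, 0)
    if memory_mode == "flat":
        # All MCDRAM is exposed as ordinary addressable memory.
        return (0, total_gb)
    if memory_mode == "hybrid":
        # "A little of each": part cache, part addressable memory.
        if not 0 < hybrid_cache_fraction < 1:
            raise ValueError("hybrid_cache_fraction must be between 0 and 1")
        cache_gb = total_gb * hybrid_cache_fraction
        return (cache_gb, total_gb - cache_gb)
    raise ValueError(f"unknown memory mode: {memory_mode!r}")


def visible_numa_nodes(cluster_domains, memory_mode):
    """Count the NUMA nodes an OS would see under the assumed rule."""
    if cluster_domains not in (1, 2, 4):
        raise ValueError("cluster_domains must be 1, 2, or 4")
    _, addressable_gb = split_mcdram(memory_mode)
    # Assumption: each domain gains one extra node when MCDRAM is addressable.
    return cluster_domains * (2 if addressable_gb > 0 else 1)


if __name__ == "__main__":
    for mode in ("cache", "flat", "hybrid"):
        for domains in (1, 2, 4):
            cache_gb, addressable_gb = split_mcdram(mode)
            print(f"{mode:6} domains={domains}: cache={cache_gb} GB, "
                  f"addressable={addressable_gb} GB, "
                  f"NUMA nodes={visible_numa_nodes(domains, mode)}")
```

Even this toy model shows how the same chip can present itself to software as quite different machines, depending on how it was configured at boot.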

Knights Landing is NOT limited by small memory and offloading

Knights Landing processors support up to 384 GB of DDR memory over 6 channels (~90 GB/s sustained bandwidth) and do not require applying offload constructs to hot spots, because an entire application runs on the processor itself.
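To put the ~90 GB/s figure in context, it helps to compare it with the theoretical peak of six DDR channels. The sketch below assumes DDR4-2400 (2400 mega-transfers per second) and a 64-bit (8-byte) data path per channel. Neither value appears in this article, so treat the result as a back-of-the-envelope estimate. The function names are hypothetical.

```python
def peak_ddr_bandwidth_gb_s(channels, mega_transfers_per_s, bytes_per_transfer=8):
    """Theoretical peak bandwidth in GB/s (1 GB = 10**9 bytes)."""
    # channels * (10**6 transfers/s) * bytes per transfer, scaled to 10**9 bytes/s
    return channels * mega_transfers_per_s * bytes_per_transfer / 1000


def sustained_fraction(sustained_gb_s, peak_gb_s):
    """Fraction of the theoretical peak that a sustained figure represents."""
    return sustained_gb_s / peak_gb_s


if __name__ == "__main__":
    # Assumed memory speed: DDR4-2400 on all six channels.
    peak = peak_ddr_bandwidth_gb_s(channels=6, mega_transfers_per_s=2400)
    fraction = sustained_fraction(90, peak)
    print(f"peak = {peak:.1f} GB/s, sustained = {fraction:.0%} of peak")
```

Under these assumptions the theoretical peak is 115.2 GB/s, so a sustained ~90 GB/s would be roughly 78% of peak.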

Consider the weather forecasting program called WRF (Weather Research and Forecasting). It does not have just a few hot spots where it does all its computations – instead it has a huge number of algorithms used to solve different problems. There are many parts of the application that you would like to run very fast, especially the particularly complex algorithms. Since it all runs on Knights Landing, we’ve seen very nice results, which I have documented in chapter 22 of the new Knights Landing book, coupled with the ease of using the same code as we would on any processor. Programs like this are essentially an insurmountable challenge for a GPU or coprocessor.

Machine learning and data analytics will receive a boost from the introduction of Knights Landing². Both tend to apply computational models to large datasets – the constraint has always been the amount of data you can handle given the computational power available to you. Knights Landing is a highly scalable, highly parallel device that is well suited to handle large, complex computations. Because it is a processor rather than a coprocessor, the Intel Xeon Phi technology provides you with more access to your data. Best of all, you are working with an on-package, very large processor-sized memory without the limits of any offload device (coprocessor or GPU).

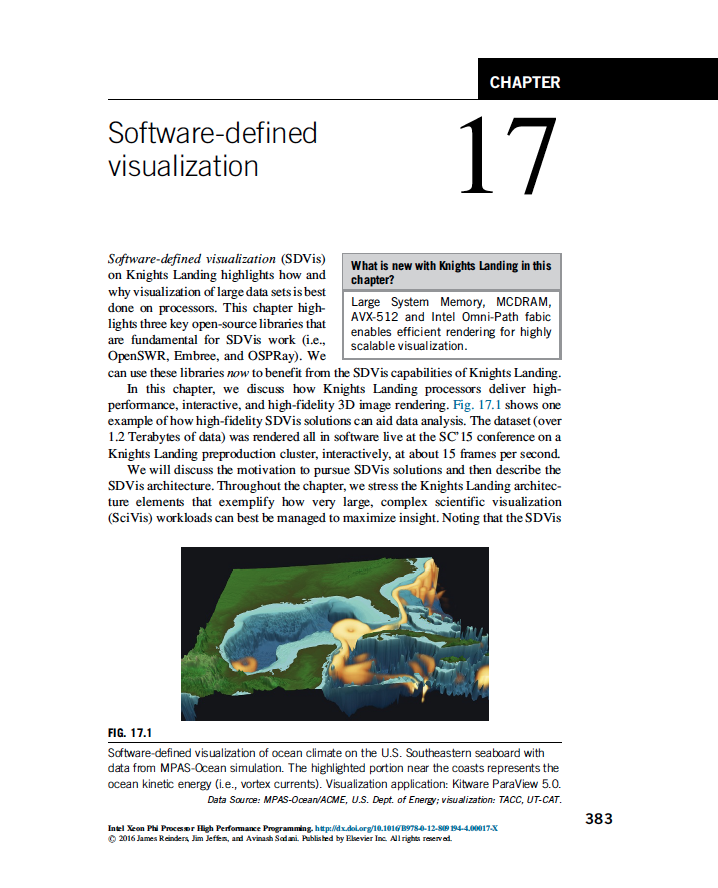

The same holds true for visualization applications – Knights Landing provides a new level of flexibility for these kinds of highly specialized, data-intensive workloads. Many people are surprised that Knights Landing can consistently beat the leading GPUs in visualization benchmarks¹. But this is really not surprising when you consider that a GPU has a hard-coded graphics pipeline, which is quite inflexible. Knights Landing, being a processor, has none of those constraints. Plus, you don’t wind up shipping massive amounts of data across the PCIe bus; the data is stored in on-package memory and is available for immediate processing.

Moving Toward Exascale

I think we can safely predict a long and happy life for the evolving Intel Xeon Phi processor family, which includes Knights Landing and all its descendants. Odds are that these next generation processors will play a major role in meeting one of HPC’s most exciting grand challenges – the realization of exascale.

Los Alamos National Laboratory’s Trinity supercomputer and the Cori supercomputer from NERSC are pre-exascale systems that will be operational in 2016. Both are powered by Knights Landing and are proof that double-digit petascale performance and the development of exascale machines are attainable without the use of attached accelerators or coprocessors.

And that’s why we emphasize that Knights Landing is a processor – a full-featured, extraordinarily powerful, highly parallel CPU – not a coprocessor or accelerator. It’s a major milestone on the road to exascale and an exciting new era in the world of high performance computing.

1 Intel Xeon Phi Processor High Performance Programming Knights Landing Edition, chapter 17, Software-defined Visualization

2 Intel Xeon Phi Processor High Performance Programming Knights Landing Edition, chapter 24, Machine Learning

—

All figures are reproduced with permission from Intel Xeon Phi Processor High Performance Programming, Knights Landing Edition by James Reinders, Jim Jeffers and Avinash Sodani, copyright 2016, published by Morgan Kaufmann, ISBN 978-0-12-809194-4. Figures are available for download at http://lotsofcores.com/KNLbook.