PGI today announced a lengthy list of new features in version 17.7 of its 2017 Compilers and Tools. The centerpiece of the release is support for the Tesla V100 GPU, Nvidia’s newest flagship silicon, announced in May and now shipping to customers.

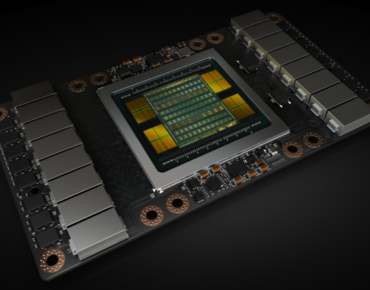

The Volta V100, developed at a cost of $3 billion, is a giant chip: 33 percent larger than the Pascal P100 and, once again, “the biggest GPU ever made.” Fabricated by TSMC on a custom 12 nm FFN high-performance manufacturing process, the V100 squeezes 21.1 billion transistors and almost 100 billion vias onto an 815 mm² die, about the size of an Apple Watch. (See the HPCwire articles “Nvidia’s Mammoth Volta GPU Aims High for AI, HPC” and “First Volta-based Nvidia DGX Systems Ship to Boston-based Healthcare Providers.”)

Overall, says PGI, the new features in version 17.7 will help deliver improved performance and programming simplicity to high-performance computing (HPC) developers who target multicore CPUs and heterogeneous GPU-accelerated systems.

“We’re seeing a 1.5-2x performance improvement in OpenACC programs compiled with PGI 17.7 on Volta compared to Pascal,” said Michael Wolfe of Nvidia’s PGI compilers & tools group. “The support in PGI 17.7 for OpenACC with CUDA Unified Memory simplifies initial porting of applications to GPUs, the improved data handling for C++14 lambdas in OpenACC is really important to many C++ programmers, and the PGI Unified Binary for OpenACC to target both multicore CPUs and GPUs is a great new feature for ISVs that need to deliver a single GPU-accelerated binary to all of their customers.”

In this release, PGI OpenACC and CUDA Fortran now support the Volta GV100 GPU, “offering more memory bandwidth, more streaming multiprocessors, next-generation Nvidia NVLink and new microarchitectural features that add up to better performance and programmability,” according to PGI, which is owned by Nvidia.

PGI 17.7 compilers also now leverage CUDA Unified Memory to simplify OpenACC programming on GPU-accelerated systems. When OpenACC allocatable data is placed in CUDA Unified Memory using a simple compiler option, no explicit data movement code or directives are needed.

Other key new features of the PGI 17.7 Compilers & Tools include:

- OpenMP 4.5 for Multicore CPUs– Initial support for OpenMP 4.5 syntax and features allows most OpenMP 4.5 programs to be compiled for parallel execution across all the cores of a multicore CPU system. TARGET regions default to the multicore host as the target device, and PARALLEL and DISTRIBUTE loops are parallelized across all OpenMP threads.

- Automatic Deep Copy of Fortran Derived Types– Movement of aggregate or deeply nested Fortran data objects between CPU host memory and GPU device memory, including traversal and management of pointer-based objects, is now supported using OpenACC directives.

- C++ Enhancements– The PGI 17.7 C++ compiler adds incremental support for C++17 features and is supported as a host compiler for NVCC in CUDA 9.0. It delivers an average 20 percent performance improvement on the LCALS loop benchmarks.

- Use C++14 Lambdas with Capture in OpenACC Regions– C++ lambda expressions provide a convenient way to define anonymous function objects at the point where they are invoked or passed as arguments. Starting with PGI 17.7, lambdas are supported in OpenACC compute regions in C++ programs, for example to drive code generation customized for different programming models or platforms. C++14 opens the door to more lambda use cases, notably polymorphic (generic) lambdas, and those capabilities are now usable in OpenACC programs.

- Interoperability with the cuSOLVER Library– Call optimized cuSOLVER dense (cusolverDn) routines from CUDA Fortran and from OpenACC Fortran, C and C++ using the PGI-supplied interface module and the PGI-compiled version of the cuSOLVER library bundled with PGI 17.7.

- PGI Unified Binary for Nvidia Tesla and Multicore CPUs– Use OpenACC to build a single application for both GPU acceleration and parallel execution on multicore CPUs. When run on a GPU-enabled system, OpenACC regions offload to and execute on the GPU. When run on a system without GPUs installed, OpenACC regions execute in parallel across all CPU cores in the system.

- New Profiling Features for CUDA Unified Memory and OpenACC– The PGI 17.7 Profiler adds new OpenACC profiling features, including support on multicore CPUs with or without attached GPUs and a new summary view that shows the time spent in each OpenACC construct. New CUDA Unified Memory features include correlating CPU page faults with the source code lines where the associated data was allocated, support for new CUDA Unified Memory page thrashing, throttling and remote map events, NVLink support and more.

Other features and enhancements of PGI 17.7 include comprehensive support for environment modules on all supported platforms, prebuilt versions of popular open source libraries and applications, and a new “Introduction to Parallel Computing with OpenACC” video tutorial series. PGI 17.7 is available for download today from the PGI website to all PGI Professional customers with active maintenance.

PGI products include high-performance parallel Fortran, C and C++ compilers and tools for x86-64 and OpenPOWER processor-based systems and Nvidia Tesla GPU accelerators running Linux, Microsoft Windows or Apple macOS.

The complete list of new features in PGI 17.7 is available at http://www.pgicompilers.com/products/new-in-pgi.htm