The Green500 list, which ranks the most energy-efficient supercomputers in the world, has almost always faced an uphill battle. As Wu Feng – custodian of the Green500 list and an associate professor at Virginia Tech – tells it, “no one” cared about energy efficiency in the early 2000s, when the seeds of the Green500 were being planted. Nevertheless, in 2007, the Green500 launched its first list, ranking the participating supercomputers by their flops per watt. Twenty-nine lists and nearly 15 years later, the Green500 organizers (now having joined forces with the Top500) continue to use the list to advocate for a future in which supercomputing treats energy efficiency as a first-order concern, even as organizations hesitate to put their increasingly large systems through the efficiency gauntlet. At ISC 2021, Feng discussed the state of the Green500 and the supercomputers it tracks.

A more ambitious (and more challenging) reporting landscape

Over the years, the Green500 has developed three levels of energy efficiency reporting: levels one, two and three. Level one submissions (which, when the Green500 launched, were all submissions) have the most forgiving requirements, allowing system operators to assess efficiency using a small subset of the system during just its core phase, with further leniency allowed in meter accuracy.

The other two levels are intended to push system operators toward more accurate reporting and more active consideration of energy efficiency. Level two submissions must use a larger portion of the system – including all its subsystems – and measure the average power of a full run with higher accuracy, while level three submissions assess the efficiency of the entire system and its energy use over a full run with exacting precision (<=0.5%).
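As a rough illustration of the flops-per-watt metric behind all three levels, the sketch below divides sustained performance by the average power over a measurement window. It is not the Green500's official methodology: the function name, the assumption of evenly spaced meter samples, and all the numbers are hypothetical and chosen only to make the arithmetic easy to follow.

```python
from statistics import mean


def gflops_per_watt(sustained_gflops: float, power_samples_watts: list[float]) -> float:
    """Efficiency = sustained performance / average power over the window.

    Assumes evenly spaced power samples, so a plain mean approximates
    the time-averaged power (an assumption of this sketch).
    """
    if not power_samples_watts:
        raise ValueError("need at least one power sample")
    return sustained_gflops / mean(power_samples_watts)


# Hypothetical meter readings in watts for one run (made up for illustration).
full_run_watts = [950.0, 1000.0, 1050.0, 1000.0]

# Hypothetical sustained performance for the same run, in gigaflops.
sustained = 20_000.0

efficiency = gflops_per_watt(sustained, full_run_watts)
print(f"{efficiency:.2f} gigaflops per watt")
```

Which samples go into the window (a core phase on part of the machine, or the full run on the whole system) is what separates the reporting levels, so the same run can yield slightly different ratings.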

Feng said that making a level two or three report comes with advantages: system operators will then have access to more accurate information, allowing them to leverage that knowledge and data in system operations (or even procurement). Furthermore, some of them had reported that the barrier to entry for a level three report was relatively low.

Reporting at different levels also has a small correlation with the values reported, Feng said: “Usually, the level one reading for flops per watt will be a smidgen higher than for level two and level three.” Comparing the Green500 reporting to miles-per-gallon ratings for a car, he said that “instead of 29 miles per gallon and 22 miles per gallon highway and city, it might be 28.96 miles per gallon and 21.96 miles per gallon … There’s higher correlation between level two and level three than [there is for] level two and level three relative to level one, but they’re all still pretty close.”

But this push for greater accuracy, of course, has its impediments: for instance, Feng explained, a level three run might indeed pose little difficulty for an organization – “but only if you have the appropriate infrastructure in place – otherwise, it can be very difficult to do.”

“[For a level three run,] you have to have the entire supercomputer instrumented – all the nodes,” Feng told HPCwire. For increasingly massive systems, the number of meters required for a level three report can span into the thousands. If you have a massive system without the appropriate instrumentation, making a more accurate submission to the Green500 can be a very tall order. (Nvidia, for instance, had been unable to make level three submissions.)

As a result, although level two and three reporting offers more accuracy – and more benefits for system operators – it is infeasible to require those higher standards, particularly considering that even a level one report is optional for submission to the Top500, despite the lists joining forces five years ago. (Feng did say that one proposal had suggested that the organizers only give awards to Green500 systems that had made a level two or higher report.) “We started with level one mainly to try and get people to start reporting,” he said. “Because if the barrier to entry is that you have to do a level three measurement, we would have even fewer entries in the Green500.”

But beyond the push for level two and three reporting, the broader Green500 list faces some general resistance. “Just doing a Top500 run is labor-intensive, and then we’re adding onto it the measurement of power,” Feng said. “So it’s hard for a lot of these institutions to be commandeering, if you will, their supercomputers for such a long period of time.”

Furthermore, Feng explained that many Top500 systems choose not to report to the Green500 because they know or suspect that their energy efficiency rating will not be competitive. “By and large, most of the measurements [on the Green500 list] are of the supercomputers that are more energy efficient,” he said. “You see a smattering of ones farther down … but that’s probably because they were higher up earlier on and then they just kept reporting the number.”

These tendencies have created a dichotomous Green500 list that exhibits both promising and worrying reporting trends. “We had a high of approximately 350 submissions early on when everything was level one,” Feng said. “[But] the level of reporting has come down somewhat.” Indeed, the most recent edition had 181 submissions – a far cry from that high-water mark, which Feng said was largely fueled by IBM’s strong interest and investment in energy efficiency.

On the other hand, the average reporting quality of the respondents is going up, as are the raw numbers of respondents choosing level two and three reporting. The 29 level two submissions – more than 16 percent of overall submissions – are at least a four-year high, as are the 14 level three submissions. The higher-tier levels are also overrepresented among the most efficient supercomputers, with only five of the Green500’s top ten using level one reporting.

Increasing efficiency, especially at the high end

Trends in the systems themselves are promising, as well: systems are broadly approaching 30-gigaflops-per-watt efficiency, with heterogeneous systems “really delivering and driving on the energy efficiency front” and accelerators dominating the list. These trends are accentuated in the higher echelons of system efficiency: “The median has been improving steadily,” Feng said, “[but] it’s the top end of the list that’s been improving much more rapidly.” The Green500’s top ten also showed a strong 60 percent turnover from November’s list, drawing a sharp contrast to the relatively stable Top500 list it accompanied.

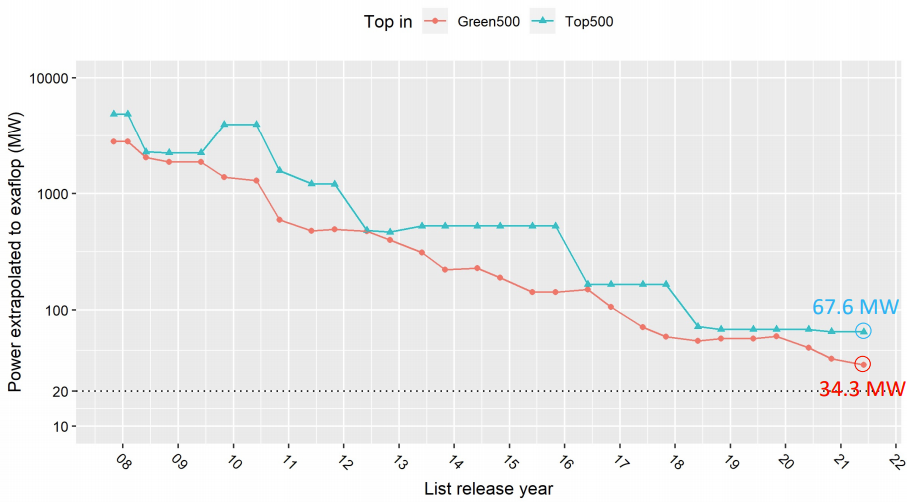

Despite that strong turnover, Feng remains bearish about the ambitious 20MW power goal that has long loomed over U.S. plans for exascale supercomputing. Throughout his talk, Feng extrapolated various trends out to a one-exaflops system: the imminent 30-gigaflops-per-watt efficiency of the Green500, for instance, “linearly extrapolates to an exascale computer in approximately 33MW”; based on the top system on the Top500, the extrapolation was closer to 68MW. Whether any of the three planned U.S. exascale systems will hit the 20MW (50-gigaflops-per-watt) target remains a subject of debate in the broader community, of course – and for more on that, read HPCwire’s feature on the Frontier system.
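The linear extrapolation Feng describes is simple division, and a minimal sketch makes the figures easy to check. The function names below are hypothetical, and the sketch assumes efficiency stays constant as a system scales to one exaflops, which is exactly what a linear extrapolation assumes.

```python
EXAFLOPS = 1e18  # one exaflops, in floating-point operations per second


def power_mw_at_exascale(gflops_per_watt: float) -> float:
    """Power (MW) needed to sustain one exaflops at a fixed efficiency."""
    watts = EXAFLOPS / (gflops_per_watt * 1e9)
    return watts / 1e6


def gflops_per_watt_for_budget(power_mw: float) -> float:
    """Efficiency (gigaflops per watt) implied by an exaflops power budget."""
    return EXAFLOPS / (power_mw * 1e6) / 1e9


if __name__ == "__main__":
    for efficiency in (30.0, 50.0):
        print(f"{efficiency:.0f} GF/W -> {power_mw_at_exascale(efficiency):.1f} MW")
    print(f"68 MW -> {gflops_per_watt_for_budget(68.0):.1f} GF/W")
```

At 30 gigaflops per watt the result is about 33.3MW, and at 50 gigaflops per watt it is 20MW, matching the figures quoted above. Run in reverse, the 68MW extrapolation corresponds to an efficiency of roughly 14.7 gigaflops per watt.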

Now, Feng and the Green500 press on, working to push the envelope of energy efficiency in a supercomputing status quo that regularly pushes into tens of megawatts per system.

“The goal of the list is we seek to raise awareness and encourage energy efficiency of supercomputers, and we seek to drive energy efficiency as a first-order design constraint that is on par with performance,” Feng said. Later, he added: “The problem is that … frankly, here in the United States, we’re in a society that is all about performance.”

To view the full June 2021 Green500 list, click here.