The integration of quantum computing into high-performance computing (HPC) centers is a topic of growing interest and urgency. As quantum computing matures, the question is no longer just about its theoretical capabilities but also its practical applicability in real-world computing environments. In fact, many organizations that are shopping for quantum computers require them to be “HPC-ready”, looking for quantum solutions that are not just powerful but also synergistic with existing HPC infrastructures.

But what does “HPC-ready” mean? Being “HPC-ready” encapsulates a multitude of factors that make a quantum computer not just powerful, but also compatible, reliable, and efficient within an HPC ecosystem. This article unpacks what it means for a quantum computer to be truly “HPC-ready,” focusing on the physical attributes of the quantum computer, the software stack, the ability to execute hybrid (classical/quantum) algorithms, and the key administrative functions of system monitoring and management.

Physical Attributes

The physical dimensions of a quantum computer must align with the existing infrastructure of an HPC center. Unlike classical computers, which have become increasingly compact, some quantum computers can be quite bulky. Ensuring that the quantum hardware fits within the designated space is the first step toward HPC readiness.

Certain quantum computers, such as those utilizing superconducting qubits, operate at extremely low temperatures to maintain quantum coherence. Specialized cooling systems like dilution refrigerators are required, which can be a logistical challenge. These cooling systems must be integrated into the existing cooling infrastructure of the data center, requiring careful planning and potentially significant modifications.

In a bit of good news, quantum computers typically draw little power compared with high-end HPC resources. Today’s quantum computing systems consume roughly 5 kW to 25 kW, substantially less than large classical HPC installations.

The Software Stack

Once the system can physically be installed and supported, attention should be paid to the software stack.

Application Programming Interfaces (APIs) and Software Development Kits (SDKs) are essential for developers to integrate quantum computing capabilities into existing applications. These should be robust, well-documented, and ideally, standardized to ensure that the quantum computer can easily ‘plug and play’ into the existing software environment. Since quantum computers are still a developing technology, there aren’t many experts in quantum computing software. As such, example programs and getting-started guides are crucial.

Middleware acts as the glue between the quantum computer and the classical HPC systems. It facilitates the execution of quantum algorithms, manages resources, and ensures that the quantum and classical systems can communicate effectively. Middleware solutions must be compatible with existing HPC software stacks.

Many HPC centers use SLURM (originally the Simple Linux Utility for Resource Management), which serves as a robust job scheduler and resource manager. Key SLURM functions include job queuing and prioritization, resource allocation with node selection and reservation capabilities, and intricate workload management through job arrays and task distribution. SLURM also offers real-time monitoring, reporting, access control, and accounting features. Because quantum computers will work alongside classical HPC systems, one way to increase efficiency is to split computational tasks between the HPC and quantum systems using SLURM. For optimal integration into such HPC environments, quantum computers should therefore also offer a SLURM interface.
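To make the idea of a SLURM interface more concrete, here is a minimal sketch of how a hybrid job request might be rendered as a batch script. It assumes the site exposes the quantum computer as a generic resource (GRES) named `qpu` and provides a partition named `hybrid`; both names, the `HybridJob` class, and the `run_vqe.py` program are hypothetical, and a real center may expose its quantum system quite differently. The script is only built as text; nothing is submitted.

```python
from dataclasses import dataclass


@dataclass
class HybridJob:
    name: str
    classical_nodes: int
    time_limit: str              # SLURM time format, e.g. "00:30:00"
    command: str                 # program launched with srun
    partition: str = "hybrid"    # hypothetical partition name
    qpu_count: int = 1           # QPUs requested via a hypothetical "qpu" GRES


def render_sbatch(job: HybridJob) -> str:
    """Render a SLURM batch script as text (no submission happens here)."""
    lines = [
        "#!/bin/bash",
        f"#SBATCH --job-name={job.name}",
        f"#SBATCH --partition={job.partition}",
        f"#SBATCH --nodes={job.classical_nodes}",
        f"#SBATCH --time={job.time_limit}",
    ]
    if job.qpu_count > 0:
        # --gres is a standard sbatch option; the "qpu" resource name is assumed.
        lines.append(f"#SBATCH --gres=qpu:{job.qpu_count}")
    lines.append("")
    lines.append(f"srun {job.command}")
    return "\n".join(lines) + "\n"


script = render_sbatch(HybridJob("vqe-demo", 2, "00:30:00", "python run_vqe.py"))
print(script)
```

Treating the quantum computer as one more schedulable resource lets existing queuing, reservation, and accounting policies apply to quantum jobs without a separate submission path.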

Quantum algorithms and quantum computers are complex, and thus it is valuable to have a flexible and open software stack that allows fine-grained controls over algorithms, the quantum circuits that implement them, the optimizers used to improve the circuits, and the pulses that drive the individual quantum bits.

Hybrid Classical/Quantum Algorithms

One of the most exciting developments in quantum computing is the rise of hybrid algorithms that leverage both classical and quantum resources. These algorithms often use classical systems for pre-processing and post-processing tasks, while the quantum computer handles the computationally intensive core calculations. Being HPC-ready means having the software infrastructure to support these hybrid algorithms efficiently.
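One common hybrid pattern is a variational loop, in which a classical optimizer repeatedly adjusts the parameters of a quantum circuit. The sketch below illustrates that division of labor under strong simplifying assumptions: the "quantum" step is simulated analytically for a single qubit (the expectation of Z after an RY(θ) rotation on |0⟩ is cos θ), whereas real hardware would estimate this value from repeated measurements. The function names are illustrative and do not come from any particular SDK.

```python
import math


def quantum_expectation(theta: float) -> float:
    """Stand-in for a QPU call: <Z> after RY(theta) on |0>, which is cos(theta)."""
    return math.cos(theta)


def parameter_shift_gradient(theta: float) -> float:
    """Gradient from two extra circuit evaluations (parameter-shift rule)."""
    shift = math.pi / 2
    return 0.5 * (quantum_expectation(theta + shift) - quantum_expectation(theta - shift))


def minimize(theta: float, learning_rate: float = 0.4, steps: int = 100) -> float:
    """Classical outer loop: plain gradient descent on the circuit parameter."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta


theta_opt = minimize(theta=0.1)
energy = quantum_expectation(theta_opt)
print(f"theta = {theta_opt:.4f}, <Z> = {energy:.6f}")
```

Each optimizer step needs fresh results from the quantum side, which is why low-latency coupling between the classical and quantum systems matters for this class of algorithm.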

Part of this software infrastructure is an orchestration layer to manage the workflow between classical and quantum computations, ensuring that tasks are allocated to the most suitable computing resources. This layer can also handle error correction and optimization, making the entire process more efficient and reliable.
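As a rough illustration of what the routing part of such an orchestration layer does, the sketch below assigns workflow tasks to resource types by task kind. The task kinds, the routing table, and the resource labels are all hypothetical; a production orchestrator would also handle dependencies, retries, and data movement.

```python
from collections import defaultdict
from typing import Dict, List, NamedTuple


class Task(NamedTuple):
    name: str
    kind: str  # e.g. "preprocess", "circuit", "decode", "postprocess"


# Hypothetical routing table: which resource type handles which kind of task.
ROUTES: Dict[str, str] = {
    "preprocess": "cpu",
    "circuit": "qpu",
    "decode": "gpu",
    "postprocess": "cpu",
}


def plan(tasks: List[Task], default: str = "cpu") -> Dict[str, List[str]]:
    """Group task names by target resource, preserving submission order."""
    assignment: Dict[str, List[str]] = defaultdict(list)
    for task in tasks:
        assignment[ROUTES.get(task.kind, default)].append(task.name)
    return dict(assignment)


workflow = [
    Task("build_hamiltonian", "preprocess"),
    Task("run_circuit", "circuit"),
    Task("decode_syndromes", "decode"),
    Task("aggregate_results", "postprocess"),
]
assignment = plan(workflow)
print(assignment)
```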

An interesting approach is to add tightly coupled GPUs to the quantum computer. GPUs are already widespread in classical HPC centers, but adding a high-speed, low-latency connection between the quantum computer and dedicated GPU resources opens new opportunities. GPUs can work in tandem with quantum computers to perform time-sensitive tasks such as error correction, while also executing hybrid algorithms.

Monitoring and Management

Real-time monitoring tools are crucial for keeping an eye on the health and performance of the quantum computer. These tools should integrate seamlessly with existing monitoring solutions in the HPC center. They should offer insights into resource utilization, error rates, and other key performance indicators (KPIs).

Some KPIs that are often used in quantum computing environments are:

- Execution Time (or Run Time): The time it takes for a quantum algorithm to run to completion is an important KPI. This can be compared against classical algorithms to gauge the efficiency gains achieved through quantum computation.

- Job Success Rate: Some quantum jobs fail, and it’s important to track these failures, notify the users and, if needed, automatically restart the jobs. Quantum systems often require frequent calibration – automatic or manual – and monitoring the success rate can help determine when calibration is in order.

- Queue Time: In an HPC environment, jobs often have to wait in a queue before being executed. Monitoring the queue time specifically for quantum jobs can help in optimizing resource allocation strategies.

- Resource Utilization: Just like in classical computing, understanding how well the computational resources are being utilized is essential.

- System Uptime: Continuous operation without unplanned outages is a key requirement in HPC settings. System uptime metrics can help in assessing the reliability of the quantum computer within the HPC ecosystem.

- User Engagement Metrics: Understanding how often and for what purposes the quantum resources are being accessed can provide valuable information for future resource planning and system improvements.

An experienced HPC manager ensures that the center not only collects and owns this data but also employs the right analytics tools to transform this raw data into actionable insights.
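Several of the KPIs listed above can be derived from basic job records. The following is a minimal sketch, assuming each record stores submission, start, and finish times in seconds plus a success flag, and that the quantum computer runs one job at a time. The `JobRecord` fields and the sample values are made up for illustration, not taken from a real system.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class JobRecord:
    submitted: float   # seconds since the start of the reporting window
    started: float
    finished: float
    succeeded: bool


def summarize(jobs: List[JobRecord], window_seconds: float) -> Dict[str, float]:
    """Compute a few KPIs over one reporting window."""
    if not jobs:
        raise ValueError("no jobs in reporting window")
    busy_seconds = sum(j.finished - j.started for j in jobs)
    return {
        "job_success_rate": sum(j.succeeded for j in jobs) / len(jobs),
        "mean_queue_time_s": mean(j.started - j.submitted for j in jobs),
        "mean_execution_time_s": mean(j.finished - j.started for j in jobs),
        # Assumes jobs never overlap on the quantum computer.
        "utilization": busy_seconds / window_seconds,
    }


jobs = [
    JobRecord(submitted=0, started=10, finished=70, succeeded=True),
    JobRecord(submitted=5, started=70, finished=100, succeeded=False),
    JobRecord(submitted=20, started=100, finished=160, succeeded=True),
    JobRecord(submitted=30, started=160, finished=190, succeeded=True),
]
kpis = summarize(jobs, window_seconds=600)
for name, value in kpis.items():
    print(f"{name}: {value:.2f}")
```

A falling job success rate in such a report could, for example, serve as a trigger for recalibration.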

Conclusion

Ultimately, the journey to make quantum computers HPC-ready is more than a technical quest; it’s a transformative endeavor that has the potential to redefine the boundaries of computational science. As we stand on the cusp of this new era, the roadmap to HPC readiness serves not just as a guide but as a testament to the collaborative spirit of innovation. It’s a call to action for quantum scientists, HPC experts, and software developers to unite their expertise and push the frontiers of what’s computationally possible. The stakes are high, but the rewards of unleashing the untapped potential of quantum computing in real-world applications could be game-changing.

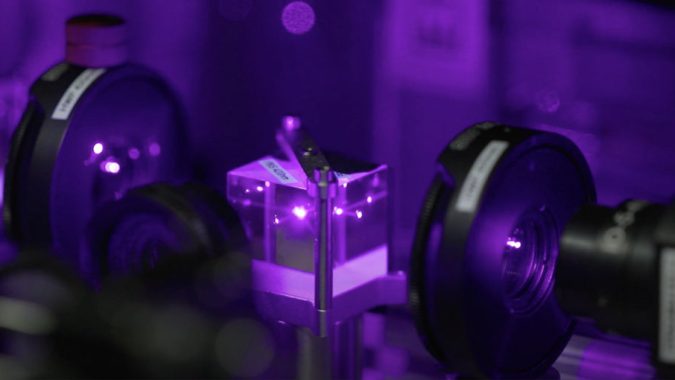

Top Image: Neutral-atom-based quantum computing platform from QuEra