Feb. 27, 2024 — Solar energy is one of the most promising, widely adopted renewable energy sources, but improving the efficiency of the solar cells that convert light into electricity remains a challenge. Scientists have turned to the High-Performance Computing Center Stuttgart to understand how strategically designing imperfections in the system could lead to more efficient energy conversion.

Since the turn of the century, Germany has made major strides in solar energy production. In 2000, the country generated less than one percent of its electricity with solar power, but by 2022, that figure had risen to roughly 11 percent. A combination of lucrative subsidies for homeowners and technological advances that brought down the cost of solar panels helped drive this growth.

With global conflicts making oil and natural gas markets less reliable, solar power stands to play an even larger role in helping meet Germany’s energy needs in the years to come. While solar technology has come a long way in the last quarter century, the solar cells in contemporary solar panels still only operate at about 22 percent efficiency on average.

In the interest of improving solar cell efficiency, a research team led by Prof. Wolf Gero Schmidt at the University of Paderborn has been using high-performance computing (HPC) resources at the High-Performance Computing Center Stuttgart (HLRS) to study how these cells convert light to electricity. Recently, the team has been using HLRS’s Hawk supercomputer to determine how designing certain strategic impurities in solar cells could improve performance.

“Our motivation on this is two-fold: at our institute in Paderborn, we have been working for quite some time on a methodology to describe microscopically the dynamics of optically excited materials, and we have published a number of pioneering papers about that topic in recent years,” Schmidt said. “But recently, we got a question from collaborators at the Helmholtz Zentrum Berlin who were asking us to help them understand at a fundamental level how these cells work, so we decided to use our method and see what we could do.”

Recently, the team used Hawk to simulate how excitons — a pairing of an optically excited electron and the electron “hole” it leaves behind — can be controlled and moved within solar cells so more energy is captured. In its research, the team made a surprising discovery: it found that certain defects in the system, introduced strategically, would improve exciton transfer rather than impede it. The team published its results in Physical Review Letters.

Designing Solar Cells for More Efficient Energy Conversion

Most solar cells, much like many modern electronics, are primarily made of silicon. After oxygen, it is the second most abundant chemical element in the Earth’s crust in terms of mass. Silicon makes up around 15 percent of our entire planet and 25.8 percent of the Earth’s crust. The basic material for climate-friendly energy production is therefore abundant and available almost everywhere.

However, this material does have certain drawbacks for capturing solar radiation and converting it into electricity. In traditional, silicon-based solar cells, light particles, called photons, transfer their energy to available electrons in the solar cell. The cell then uses those excited electrons to create an electrical current.

The problem? High-energy photons carry far more energy than silicon can transform into electricity. Violet light photons, for instance, have about three electron volts (eV) of energy, but silicon is only able to convert about 1.1 eV of that energy into electricity. The rest of the energy is lost as heat, which both wastes an opportunity to capture additional energy and reduces solar cell performance and durability.
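As a rough illustration of that loss, the short Python sketch below works through the arithmetic using only the two figures quoted above. The function name is hypothetical and the calculation is a back-of-the-envelope estimate, not part of the team's simulations.

```python
# Figures quoted in the article; everything else is illustrative.
VIOLET_PHOTON_EV = 3.0   # approximate energy of a violet photon
SILICON_USABLE_EV = 1.1  # energy silicon can convert per photon


def heat_loss_fraction(photon_ev: float, usable_ev: float) -> float:
    """Return the share of a photon's energy that ends up as heat
    when at most `usable_ev` of it can be converted to electricity."""
    if photon_ev <= 0:
        raise ValueError("photon energy must be positive")
    converted = min(photon_ev, usable_ev)
    return (photon_ev - converted) / photon_ev


lost = heat_loss_fraction(VIOLET_PHOTON_EV, SILICON_USABLE_EV)
print(f"About {lost:.0%} of a violet photon's energy is lost as heat")
```

Under these numbers, nearly two-thirds of a violet photon's energy never reaches the electrical circuit.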

In recent years, scientists have started to look for ways to reroute or otherwise capture some of that excess energy. While several methods are being investigated, Schmidt’s team has focused on using a molecule-thin layer of tetracene, an organic semiconductor material, as the top layer of a solar cell.

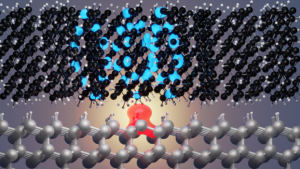

Unlike silicon, when tetracene receives a high-energy photon, it splits the resulting exciton into two lower-energy excitons in a process known as singlet fission. By placing a carefully designed interface layer between tetracene and silicon, the resulting low-energy excitons can be transferred from tetracene into silicon, where most of their energy can be converted into electricity.
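To see why splitting one exciton into two matters, consider a deliberately idealized energy budget in Python. It makes several assumptions that go beyond the article: each exciton delivers at most the roughly 1.1 eV that silicon can convert, transfer across the interface is lossless, and fission only happens when the photon carries at least twice that energy. The function name and parameters are hypothetical.

```python
def usable_energy_ev(photon_ev: float, usable_ev: float = 1.1,
                     singlet_fission: bool = False) -> float:
    """Idealized upper bound on the energy converted per photon.

    Assumption: with singlet fission, a photon carrying at least
    2 * usable_ev yields two excitons, each contributing up to usable_ev.
    Real devices lose energy at every step, so this is only a ceiling.
    """
    excitons = 2 if singlet_fission and photon_ev >= 2 * usable_ev else 1
    return min(photon_ev, excitons * usable_ev)


photon = 3.0  # approximate energy of a violet photon, in eV
for fission in (False, True):
    captured = usable_energy_ev(photon, singlet_fission=fission)
    print(f"singlet fission={fission}: up to {captured:.1f} eV "
          f"({captured / photon:.0%} of the photon energy)")
```

In this simplified picture, the ceiling for a violet photon rises from 1.1 eV to 2.2 eV. It is meant only to convey the idea, not to predict device performance.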

Utility in Imperfection

Whether using tetracene or another material to augment traditional solar cells, researchers have focused on trying to design the perfect interface between constituent parts of a solar cell to provide the best-possible conditions for exciton transfer.

Schmidt and his team use ab initio molecular dynamics (AIMD) simulations to study how particles interact and move within a solar cell. With access to Hawk, the team is able to do computationally expensive calculations to observe how several hundred atoms and their electrons interact with one another. The team uses AIMD simulations to advance time at femtosecond intervals to understand how electrons interact with electron holes and other atoms in the system. Much like other researchers, the team sought to use its computational method to identify imperfections in the system and look for ways to improve on it.

In search of the perfect interface, the team found a surprise: an imperfect interface might be better for exciton transfer. In an atomic system, atoms that are not fully saturated, meaning they are not completely bonded to other atoms, have so-called “dangling bonds.” Researchers normally assume dangling bonds lead to inefficiencies in electronic interfaces, but in its AIMD simulations, the team found that silicon dangling bonds actually fostered additional exciton transfer across the interface.

“Defect always implies that there is some unwanted thing in a system, but that is not really true in our case,” said Prof. Uwe Gerstmann, a University of Paderborn professor and collaborator on the project. “In semiconductor physics, we have already strategically used defects that we call donors or acceptors, which help us build diodes and transistors. So strategically, defects can certainly help us build up new kinds of technologies.”

Dr. Marvin Krenz, a postdoctoral researcher at the University of Paderborn and lead author on the team’s paper, pointed out the contradiction in the team’s findings compared to the current state of solar cell research. “It is an interesting point for us that the current direction of the research was going toward designing ever-more perfect interfaces and to remove defects at all costs. Our paper might be interesting for the larger research community because it points out a different way to go when it comes to designing these systems,” he said.

Armed with this new insight, the team now plans to use its future computing power to design interfaces that are perfectly imperfect, so to speak. Knowing that silicon dangling bonds can help foster this exciton transfer, the team wants to use AIMD to reliably design an interface with improved exciton transfer. For the team, the goal is not to design the perfect solar cell overnight, but to continue to make subsequent generations of solar technology better.

“I feel confident that we will continue to gradually improve solar cell efficiency over time,” Schmidt said. “Over the last few decades, we have seen an average annual increase in efficiency of around 1% across the various solar cell architectures. Work such as the one we have carried out here suggests that further increases can be expected in the future. In principle, an increase in efficiency by a factor of 1.4 is possible through the consistent utilization of singlet fission.”
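For a sense of scale, the factor Schmidt mentions can be applied to the average efficiency of about 22 percent cited earlier in the article. Combining the two figures this way is an illustrative assumption, not a projection from the research team, and the variable names are hypothetical.

```python
# Both figures come from the article; combining them is an assumption.
BASELINE_EFFICIENCY = 0.22    # average efficiency of contemporary solar cells
SINGLET_FISSION_FACTOR = 1.4  # in-principle gain quoted by Schmidt

improved = BASELINE_EFFICIENCY * SINGLET_FISSION_FACTOR
print(f"In-principle efficiency: {improved:.1%}")
```

Under that assumption, a cell operating at 22 percent today would reach roughly 31 percent.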

Source: Eric Gedenk, GCS