Google is officially in the Infrastructure-as-a-Service (IaaS) business. Its newly launched utility computing service, Google Compute Engine, lets users run workloads inside Big G's massive datacenters, much as Amazon does with its Elastic Compute Cloud (EC2). Urs Hölzle, Google's vice president of engineering and a longtime employee, announced the service last Thursday at the company's annual developer conference. The compute service joins Google's App Engine PaaS, which launched in 2008, now supports over one million active apps and receives 7.5 billion hits per day.

Mirroring Microsoft's news from earlier in the month, Google simultaneously moved down the stack and broadened its cloud portfolio by adding an IaaS offering. The only surprise is that it didn't happen sooner: Google's uber-scale datacenters are a natural fit for a public infrastructure cloud of this kind. According to Google, the success of App Engine, together with customer demand, led to the debut of an IaaS product.

While comparisons can be made to HP's Cloud Service, Microsoft's revamped Azure offering and Amazon runner-up Rackspace, the company with the biggest target on its back is the leading IaaS purveyor, Amazon, which launched its EC2 service in 2006, two years ahead of Google's App Engine. Google, though, is one of the few companies with the infrastructure scale and technical know-how to threaten AWS's dominance. Utility computing is a volume business, and Google is well positioned to support that volume. In hindsight, you could say the Web-era poster child has spent the last decade preparing for this moment.

The new service lets users run Linux virtual machines (VMs) on the same infrastructure that powers Google. VMs are currently available with 1, 2, 4 or 8 virtual cores, each with 3.75 GB of RAM per virtual core. Partners are already lining up: RightScale, Puppet Labs, Opscode, MapR, CliQr and Numerate have all announced integration with Google Compute Engine.
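Because memory scales linearly with core count, the available VM shapes follow directly from the two figures above. The short Python sketch below simply multiplies them out; the constant and function names are illustrative, not part of any Google API.

```python
# VM shapes as described in the article: 1, 2, 4 or 8 virtual cores,
# with a fixed 3.75 GB of RAM per virtual core (names are illustrative).
RAM_PER_CORE_GB = 3.75
CORE_COUNTS = (1, 2, 4, 8)


def ram_for_cores(cores: int) -> float:
    """Total RAM in GB for a VM with the given number of virtual cores."""
    return cores * RAM_PER_CORE_GB


for cores in CORE_COUNTS:
    print(f"{cores} virtual core(s): {ram_for_cores(cores):.2f} GB RAM")
```

The 8-core shape works out to 30 GB, which matches the n1-standard-8-d machine type used in the pricing comparison.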

Google is emphasizing three qualities essential to a successful IaaS play: scale, performance and value. Google Product Manager Craig McLuckie spelled out how the company will drive each of these areas. On value, McLuckie makes the bold claim that Google Compute Engine delivers 50 percent more compute power per dollar than the competition, but he does not say how he arrived at this figure.

While direct apples-to-apples comparisons are difficult, that doesn't stop analysts from trying. Google's n1-standard-8-d machine type furnishes an 8-core VM with 30 GB of RAM and 22 Google Compute Engine Units (GCEUs) at $1.16 per hour. The closest Amazon EC2 instance type is the High-Memory Double Extra Large Instance (m2.2xlarge), with 4 virtual cores, 34.2 GB of RAM and 13 EC2 Compute Units (ECUs) at $0.90 per hour. The Google VM therefore offers about 1.7 times as much CPU, and when the two are lined up on price per compute unit per hour, Google comes out ahead (cheaper): $0.053 versus $0.069. Note that a GCEU is approximately equal to an EC2 Compute Unit, and the Amazon price is for its least expensive region (US East, Virginia).
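The $/unit/hour figures are just hourly price divided by compute units. The Python sketch below reproduces that arithmetic from the numbers quoted above. As the comparison itself assumes, it treats a GCEU and an ECU as roughly equivalent; the `Offering` class is a hypothetical helper for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offering:
    """One VM/instance type, using the figures quoted in the article."""
    name: str
    cores: int
    ram_gb: float
    compute_units: float   # GCEUs for Google, ECUs for Amazon (assumed ~equal)
    price_per_hour: float  # USD; the Amazon figure is the US East (Virginia) price

    def price_per_unit_hour(self) -> float:
        """Dollars per compute unit per hour."""
        return self.price_per_hour / self.compute_units


google = Offering("n1-standard-8-d", 8, 30.0, 22, 1.16)
amazon = Offering("m2.2xlarge", 4, 34.2, 13, 0.90)

for offering in (google, amazon):
    print(f"{offering.name}: ${offering.price_per_unit_hour():.3f} per unit-hour")

# Relative CPU capacity, measured in compute units
print(f"CPU ratio (Google/Amazon): {google.compute_units / amazon.compute_units:.1f}x")
```

Running it prints $0.053 and $0.069 per unit-hour and a CPU ratio of 1.7x, matching the figures in the comparison. Any change in either vendor's pricing would, of course, shift the result.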

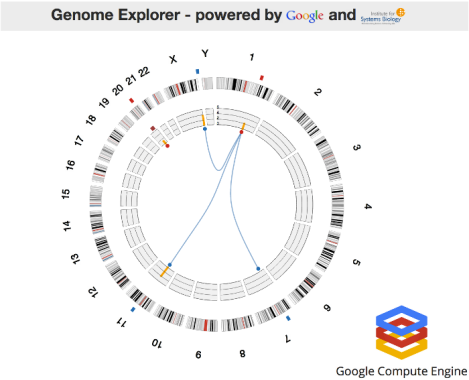

Circular representation of a human genome

While the public cloud can handle a range of workloads, Google has suggested several primary use cases: batch processing jobs, such as video transcoding and rendering; data processing and analysis using Hadoop; and high-performance computing (HPC). On the HPC front, the service lets users launch enormous compute clusters on the order of tens of thousands of cores or more. In fact, during his keynote, Hölzle said that every single core could be put to a specific task: 771,886 cores at the time, a number that is set to grow.

To illustrate the power and scale of Google Compute Engine, Hölzle demonstrated the Cancer Regulome Explorer application used by the Institute for Systems Biology. Whereas the app would normally take about 10 minutes to find a single match, running it on 10,000 Google processor cores (1,250 8-core VMs) produces a match in mere seconds. With the same number of cores, the entire computation finishes in an hour rather than 15 hours.
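The cluster figures in the demo reduce to two simple calculations: dividing the core count by cores per VM, and dividing the old runtime by the new one. The minimal Python sketch below assumes 8-core VMs, as in the demo, and uses illustrative helper names that are not part of any Compute Engine tooling.

```python
import math

CORES_PER_VM = 8  # the demo used 8-core VMs


def vms_needed(total_cores: int, cores_per_vm: int = CORES_PER_VM) -> int:
    """Smallest number of VMs that together provide at least total_cores cores."""
    return math.ceil(total_cores / cores_per_vm)


def speedup(baseline_hours: float, new_hours: float) -> float:
    """How many times faster the new run is than the baseline run."""
    return baseline_hours / new_hours


print(vms_needed(10_000))  # 10,000 cores / 8 cores per VM
print(speedup(15, 1))      # 15-hour job finished in 1 hour
```

This confirms the 1,250-VM figure and shows that finishing in one hour instead of 15 is a 15x speedup. Rounding up with `math.ceil` covers core counts that are not an exact multiple of the VM size.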

“That is how infrastructure-as-a-service is supposed to work,” Hölzle said.

Taking it a step further, Hölzle showed that allocating about 600,000 cores to the same genomics app produced multiple matches every few seconds. To take advantage of this many cores, the code was modified ahead of time using Google's ExaCycle technology. Further details of Google's work with the Institute for Systems Biology are outlined in this case study.

There's no Google equivalent yet of Amazon's HPC instance types, but it's likely only a matter of time. While Google's offerings are less mature than the Amazon cloud portfolio, the gap is not that large considering how much younger Google's platform is.

Google Compute Engine joins the suite of services that make up the Google Cloud Platform, which also includes Google App Engine, Google BigQuery, Google Cloud Storage, Google Cloud SQL and the Prediction API. These roughly mirror Amazon Web Services; for example, Google Cloud Storage is similar to Amazon's Simple Storage Service (S3).

Google Compute Engine is currently available in “limited preview,” accessible here.