Ahead of ISC 2016, taking place in Frankfurt, Germany, next week, HPCwire reached out to Paul Messina to get an update on the deliverables and timeline for the United States’ Exascale Computing Project. The ten-year project has been charged with standing up at least two capable exascale supercomputers in 2023 as part of the larger National Strategic Computing Initiative (NSCI) launched by the Obama Administration in July 2015.

Earlier this year, Messina, senior strategic advisor and Distinguished Fellow at the U.S. Department of Energy’s Argonne National Laboratory, was selected to lead the project. He oversees a leadership team that includes staff from the six major participating DOE national laboratories: Argonne, Los Alamos, Lawrence Berkeley, Lawrence Livermore, Oak Ridge and Sandia. The program office for ECP is located at Oak Ridge.

“We’re focusing more on delivered performance than the number of FLOPS,” says Messina, recalling that in the 1990s, breaking the petaflops barrier required a speedup of four orders of magnitude in floating point operations per second (FLOPS), ten thousand times faster than the state of the art at that point. “Now we’re focusing on exascale not exaflops,” Messina adds.

The threshold for a “capable exascale” machine is not as well defined as a FLOPS-based target. Department of Energy (DOE) documents for the coming next-generation CORAL systems – Summit at Oak Ridge, Aurora at Argonne, and Sierra at Livermore – present performance goals in terms of some factor over current systems. Accordingly, acceptance tests for CORAL will be based on a selected set of applications performing some factor relative to previous systems. Messina says that “capable exascale” also means there’s a decent software stack that is useful for a lot of different types of applications.

Messina shared a working definition of “capable exascale” from a presentation he delivered at the OLCF User Meeting last month:

- A capable exascale system is defined as a supercomputer that can solve science problems 50X faster (or more complex) than on the 20PF systems (Titan, Sequoia) of today in a power envelope of 20-30 MW and is sufficiently resilient that user intervention due to hardware or system faults is on the order of a week on average.

- And has a software stack that meets the needs of a broad spectrum of applications and workloads.

“We need to assure that there are broad societal benefits other than bragging rights,” says Messina. Starting in 2007, the DOE sponsored ten studies intended to prove the mission benefits of exascale computing. Joining in the process were 1,100 stakeholders from across materials science, nuclear energy, high-energy physics, climate studies and other domains. “Every case revealed interesting and important impacts in each of those domains that the participants felt would be enabled by exascale computing power,” observes Messina.

The applications that can be advanced with exascale computing include wind energy, nuclear energy, digital manufacturing, climate science, weather forecasting, and many areas of national security. These are some of the domains with a more obvious benefit to society, says Messina, but there are of course others from fundamental and theoretical domains like astrophysics and quantum chromodynamics, for example.

Taking the case of climate science, exascale computing capability is required for increased realism, number and reliability of model-based climate predictions. Other opportunities include quantification of uncertainty in climate model prediction and more accurate explicit simulation of local to global weather phenomena, including extreme events.

From Giga- to Exa-

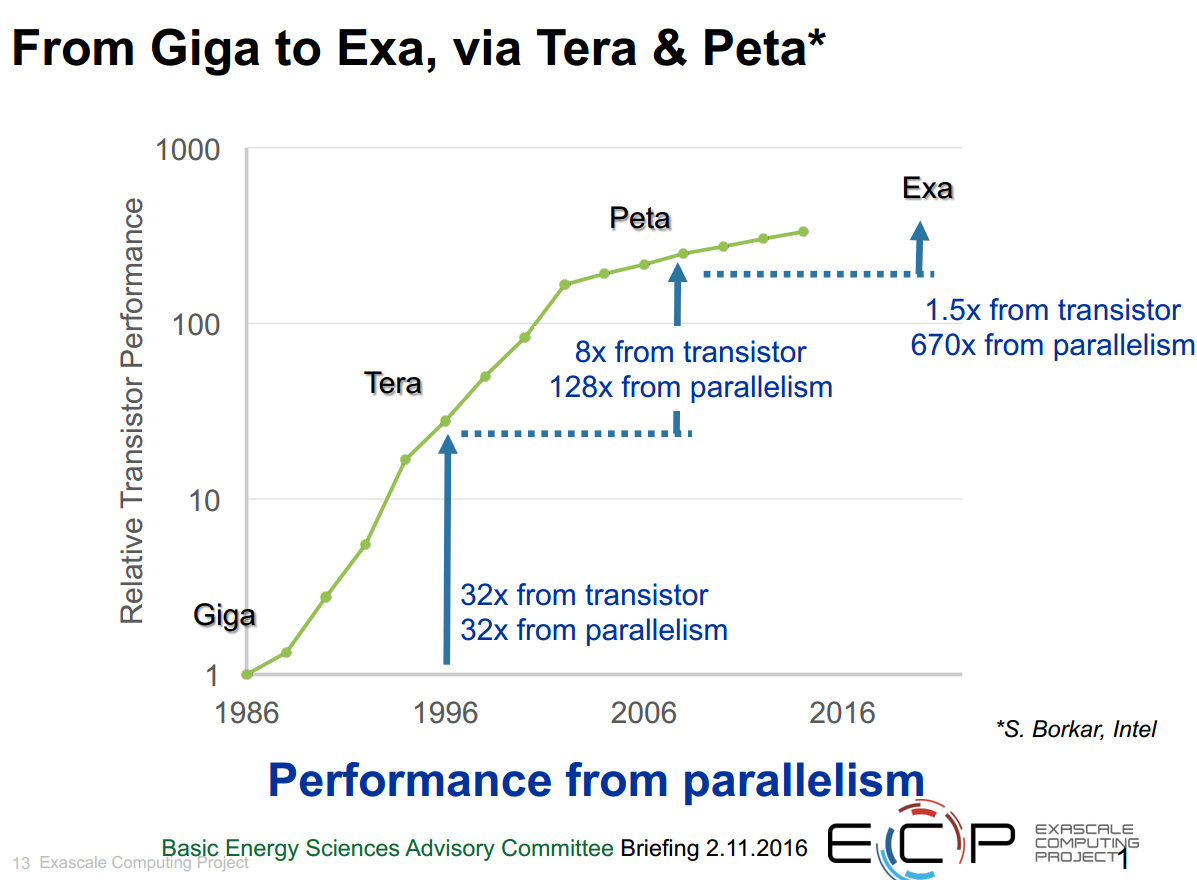

As supercomputing has hit its FLOPS marks, from giga- to tera- to peta-, and now looks ahead to exascale, the performance drivers have shifted. “CMOS technology is flattening out and the amount of speedup from transistor improvements has really dropped, so most of the improvement is from parallelism,” says Messina. The trends are well illustrated in this slide.

Achieving system performance gains over time has grown more challenging with the stalling of Dennard scaling and the end of the “free lunch” situation, where performance increases were a matter of waiting for the next generation of chips. With the trinity of “faster, cheaper, cooler” hardware facing diminishing returns, software is getting increased attention.

A major part of ECP, says Messina, is supporting the development of full-fledged applications that require exascale computing and are important to the missions of the DOE and the National Nuclear Security Administration (NNSA). ECP is in the process of reviewing proposals that were submitted by the labs to decide which ones are the best candidates for project support. Each application will be tied to a specific goal and a specific problem.

“It’s an important part of the ECP,” observes Messina. “In the past, such projects have focused primarily on some hardware and some of the supporting software, like libraries. That’s important, but we also need to develop simultaneously the applications. It’s only through the applications that we really know what the requirements will be on the supporting software and to some extent on the hardware. And by having a broad set of applications we’ll get the requirements. And that will be part of what we’re funding them for is to interact with the rest of the project.”

Messina reiterates that while the hardware is fairly straightforward, the software has many dimensions. “There’s software to have algorithms that are more energy efficient and employ less data movement, algorithms for data management, exascale algorithms and algorithms for discovery, design, and decision,” says Messina.

These and other focus areas are laid out in a 2014 report (from a 2013 study) from the Advanced Scientific Computing Advisory Committee, elucidating the top ten challenges of reaching exascale.

Aside from backing delivery of the required software and hardware technologies necessary for a 2023 exascale machine, the ECP will also contribute to the cost of preparing two or more DOE Office of Science and NNSA facilities to house the coming exascale machines. The labs will, as with previous procurements, be responsible for purchasing the systems; however, given the well-documented exascale challenges, the project is carving out additional funding to supplement facilities work, says Messina.

In alignment with the guiding principles of NSCI, another big part of the ECP mission is to maximize the benefits of HPC for US economic competitiveness and scientific discovery. “One way to think about the economic competitiveness is not just that US industry will be able to use the exascale systems, but that the building blocks of the exascale systems together with the software environment will be such that it will enable affordable, smaller configurations than exascale and that will presumably help a large segment of US industry – companies like General Electric and Boeing for example – but also ones that are not as big,” says Messina.

As an example of this top-down benefit flow, if an exascale computer consumes 30 MW, a petaflops system should consume only 30 kW, and if the purchase cost of an exaflops system is $200 million (the current target), a petaflops computer would cost $200,000.
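The arithmetic behind that example is a simple division by 1,000, the ratio between an exaflops and a petaflops. The short Python sketch below reproduces it under the assumption that power and cost scale linearly with system size, which is an idealization implied by the example rather than something the ECP has stated; the function name `scale_down` and its parameters are hypothetical, chosen only for illustration.

```python
def scale_down(exa_power_mw: float, exa_cost_usd: float, factor: int = 1000):
    """Scale an exascale system's power and cost down by `factor`.

    Assumes purely linear scaling (an idealization, not an ECP figure).
    Returns (power in kW, cost in USD) for the smaller system.
    """
    power_kw = exa_power_mw * 1000 / factor  # convert MW to kW, then scale down
    cost_usd = exa_cost_usd / factor
    return power_kw, cost_usd


# Figures from the article: 30 MW and a $200 million purchase cost.
power_kw, cost_usd = scale_down(30, 200_000_000)
print(f"{power_kw:.0f} kW, ${cost_usd:,.0f}")  # prints "30 kW, $200,000"
```

Real systems would not scale this cleanly, since fixed costs such as interconnect, storage and facilities do not shrink in proportion, so the numbers are best read as a rough target.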

Integration and co-design are essential parts of the ECP, meant to ensure that targeted applications will be ready to use the exascale systems productively. Messina reports that co-design is baked into the program. “We expect that the applications teams and the software development teams and the hardware teams will work together to come up with a better design of software, hardware and the applications,” he states. “In addition, though, in the focus area that we call applications development, we are going to fund a small number of co-design centers.”

The DOE funded three co-design centers five years ago, each of which focused on a single application: nuclear energy, combustion, and materials science. The co-design centers in the ECP will focus more on methods that are used by several applications, such as adaptive mesh refinement (AMR). A co-design center in this area would hook into several applications that require AMR to be efficient. Another example of a potential co-design focus would be particle-in-cell methods. The ECP recently issued an RFP for these co-design centers.

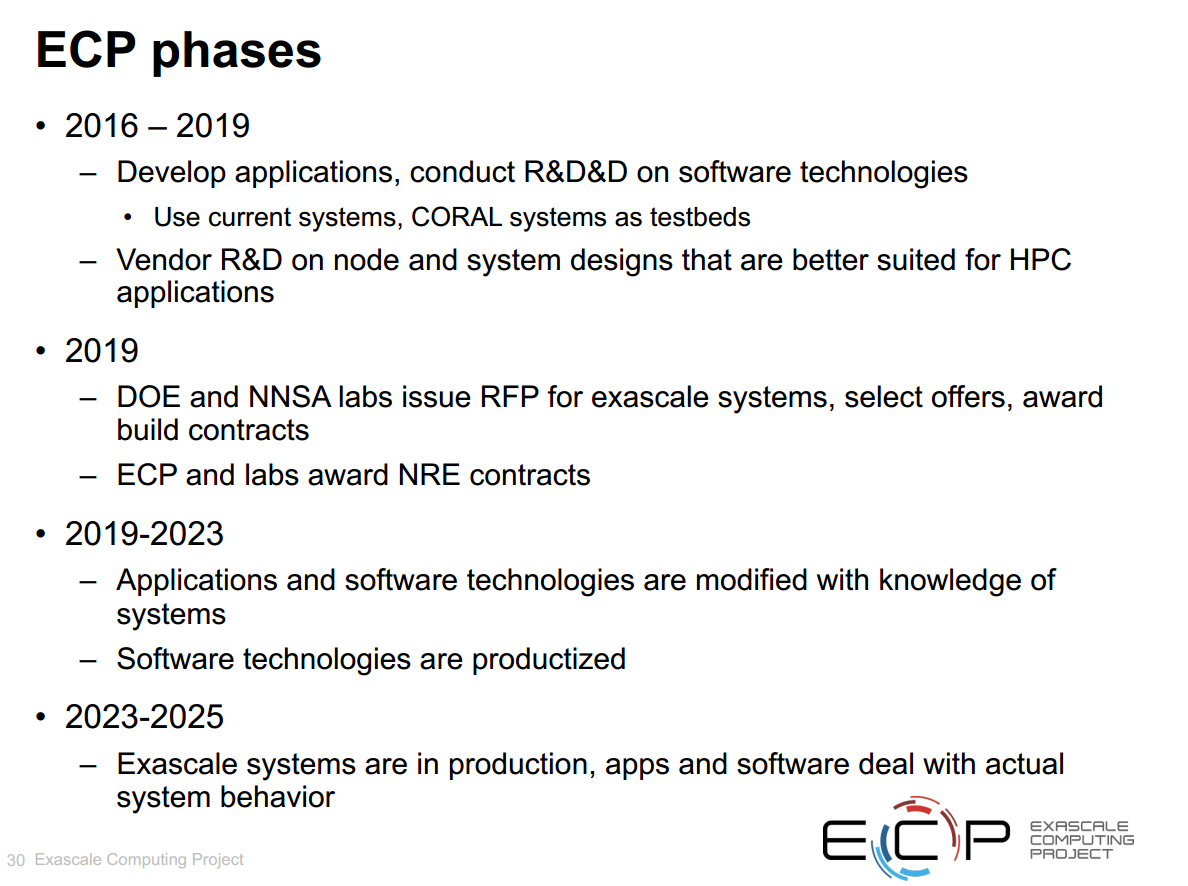

Messina says ECP expects to begin funding applications, co-design and many of the system software pieces within this fiscal year, by September 30.

Under PathForward (the successor to DesignForward and FastForward), there will be RFPs put out to vendors to fund R&D on node and system designs. The draft Technical Requirements are posted at http://www.exascaleinitiative.org/pathforward/.

ECP and its partners are aiming to get the hardware contracts signed by the end of FY2016 or early in FY2017. In future years, ECP is likely to have RFPs for ISVs, and industry is also expected to be involved, says Messina. “There’s quite a few pieces of software that are commercial and we’d like to get them engaged in evolving their products so they will continue to be useful on future systems,” he adds.

In 2019, the ECP will be working closely with the labs to ramp up to exascale and to determine requirements. While the labs that participate in the CORAL partnership will be issuing the RFPs, not the project, ECP will be providing a lot of the useful information, says Messina.

“Once the contracts are awarded, we in the program will have a pretty good idea of what the hardware will be for the first exascale systems,” he continues. “There are often some changes, but most of it we will know, so we can use those three-to-four years to work on the application codes and the software technology codes.” The goal is to achieve a level of robustness and production quality that will result in working systems by 2023.

Dr. Messina will be giving a presentation on the US exascale program at ISC on June 21 from 1:45-2:15 pm local time.

Of course, the US isn’t the only nation coalescing its exascale plans; the EU, Japan, and China all have their own programs under way.

Here’s a selection of exascale-relevant ISC sessions:

Exascale Architectures: Disruptions, Denials & Directions

June 21, 2016, 8:30-10:00 am

To Get the Highest Price/Performance/Watt… It’s All about the Memory

08:30 am – 09:00 am

Steve Pawlowski, Micron

Towards Exascale Computing. A Holistic Push through the European H2020 Program

09:00 am – 09:30 am

John Goodacre, University of Manchester

Computing in 2030 – Intel’s View through the Crystal Ball

09:30 am – 10:00 am

Al Gara, Intel

June 21, 2016, 01:45 pm – 03:15 pm

The Path to Capable Exascale Computing

01:45 pm – 02:15 pm

Paul Messina, ANL and ECP

The Next Flagship Supercomputer in Japan

02:15 pm – 02:45 pm

Yutaka Ishikawa, RIKEN AICS

The New Sunway Supercomputer System at Wuxi (China)

02:45 pm – 03:15 pm

Guangwen Yang, National Supercomputer Center at Wuxi

The HPC in Asia session – taking place Wednesday, June 22, from 08:30 am – 10:00 am – will offer updates from multiple countries.