A team of Harvard University and MIT researchers reports that its new neural network method for monitoring earthquakes is more accurate and orders of magnitude faster than traditional approaches. Their study, published in Science Advances last week, centered on Oklahoma, where before 2009 there were roughly two earthquakes of magnitude 3.0 or higher per year; in 2015 the number of such earthquakes exceeded 900.

Earthquake monitoring, particularly for small to medium magnitude earthquakes, has been pushed into the limelight in states where the fracking industry is booming and the related disposal of wastewater has been implicated in the rise in the number of earthquakes. The result has been contentious debate over fracking’s role in the problem and over what steps for regulation and remediation are appropriate. Not surprisingly, efforts aimed at improving monitoring and understanding of the underlying science have accelerated.

Researchers Thibaut Perol (Harvard), Michaël Gharbi (MIT), and Marine Denolle (Harvard) summarize the challenge nicely in their abstract:

“Over the last decades, the volume of seismic data has increased exponentially, creating a need for efficient algorithms to reliably detect and locate earthquakes. Today’s most elaborate methods scan through the plethora of continuous seismic records, searching for repeating seismic signals. We leverage the recent advances in artificial intelligence and present ConvNetQuake, a highly scalable convolutional neural network for earthquake detection and location from a single waveform. We apply our technique to study the induced seismicity in Oklahoma, USA. We detect more than 17 times more earthquakes than previously cataloged by the Oklahoma Geological Survey. Our algorithm is orders of magnitude faster than established methods.”

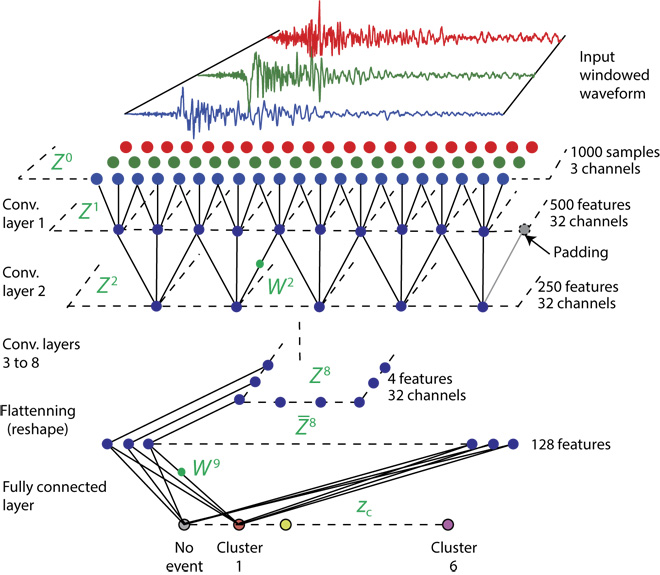

Their model is a deep convolutional network that takes a window of three-channel waveform seismogram data as input and predicts its label either as seismic noise or as an event with its geographic cluster (see figure below).
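To make the input and output of such a classifier concrete, here is a minimal pure-Python sketch of a forward pass. It is not the authors' TensorFlow implementation: the layer count, filter sizes, window length, random (untrained) weights, and the number of clusters (`N_CLUSTERS`) are illustrative assumptions, and names such as `classify_window` are hypothetical.

```python
import math
import random

def conv1d_relu(x, weights, biases, stride=2):
    """Strided 1-D convolution followed by ReLU.

    x[c][t]          : sample t of input channel c
    weights[o][c][k] : tap k of the filter linking input channel c to output o
    """
    n_samples = len(x[0])
    width = len(weights[0][0])
    out = []
    for w_o, b_o in zip(weights, biases):
        row = []
        for start in range(0, n_samples - width + 1, stride):
            acc = b_o
            for c, w_c in enumerate(w_o):
                for k, w in enumerate(w_c):
                    acc += w * x[c][start + k]
            row.append(max(0.0, acc))  # ReLU
        out.append(row)
    return out

def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def classify_window(x, conv_layers, dense_w, dense_b):
    """Return class probabilities: index 0 = noise, 1..K = event in cluster k."""
    for weights, biases in conv_layers:
        x = conv1d_relu(x, weights, biases)
    flat = [v for channel in x for v in channel]
    scores = [sum(w * v for w, v in zip(row, flat)) + b
              for row, b in zip(dense_w, dense_b)]
    return softmax(scores)

# Toy setup (all sizes are assumptions chosen to keep the example small).
rng = random.Random(0)

def rand_filters(n_out, n_in, width):
    return [[[rng.uniform(-0.5, 0.5) for _ in range(width)]
             for _ in range(n_in)] for _ in range(n_out)]

N_CLUSTERS = 6                      # hypothetical number of geographic clusters
n_classes = 1 + N_CLUSTERS          # class 0 is reserved for seismic noise
conv_layers = [(rand_filters(4, 3, 3), [0.0] * 4),   # 3 input channels -> 4
               (rand_filters(4, 4, 3), [0.0] * 4)]   # 4 -> 4
window = [[rng.gauss(0, 1) for _ in range(64)] for _ in range(3)]  # 3 x 64
# 64 samples -> 31 -> 15 after two stride-2 layers; 4 channels * 15 = 60 inputs
dense_w = [[rng.uniform(-0.1, 0.1) for _ in range(60)] for _ in range(n_classes)]
dense_b = [0.0] * n_classes

probs = classify_window(window, conv_layers, dense_w, dense_b)
label = max(range(n_classes), key=probs.__getitem__)
```

Because the weights here are random, `label` carries no meaning; the point is only that a three-channel window goes in and a single probability vector over "noise plus clusters" comes out.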

The computational requirements were substantial. Both the parameter set and the training data were too large to fit in memory, prompting the use of a batched stochastic gradient descent algorithm. ConvNetQuake was implemented in TensorFlow, and all training runs were performed on Nvidia Tesla K20Xm GPUs. “We trained for 32,000 iterations, which took approximately 1.5 hours,” write the authors, who used the Odyssey cluster supported by the Faculty of Arts and Sciences Division of Science, Research Computing Group at Harvard University.
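The idea behind batched stochastic gradient descent is that each update uses only a small random subset of the training data, so the full set never has to be processed at once. The sketch below illustrates this on a toy logistic-regression problem rather than a neural network; the model, learning rate, batch size, and synthetic data are all assumptions for illustration, and the article does not say which optimizer variant the authors used.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def minibatch_sgd(features, labels, batch_size=16, lr=0.5,
                  iterations=500, seed=0):
    """Fit logistic-regression weights with mini-batch gradient steps."""
    rng = random.Random(seed)
    n_features = len(features[0])
    w = [0.0] * n_features
    b = 0.0
    indices = list(range(len(features)))
    for _ in range(iterations):
        # Only this small batch is touched per update.
        batch = rng.sample(indices, batch_size)
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for i in batch:
            z = sum(wj * xj for wj, xj in zip(w, features[i])) + b
            err = sigmoid(z) - labels[i]  # gradient of the log loss w.r.t. z
            for j in range(n_features):
                grad_w[j] += err * features[i][j]
            grad_b += err
        w = [wj - lr * g / batch_size for wj, g in zip(w, grad_w)]
        b -= lr * grad_b / batch_size
    return w, b

# Synthetic two-class data: well-separated clusters around (-2, -2) and (2, 2).
data_rng = random.Random(1)
features, labels = [], []
for _ in range(100):
    features.append([data_rng.gauss(-2, 0.5), data_rng.gauss(-2, 0.5)])
    labels.append(0)
    features.append([data_rng.gauss(2, 0.5), data_rng.gauss(2, 0.5)])
    labels.append(1)

w, b = minibatch_sgd(features, labels)
correct = sum(
    (sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b) > 0.5) == bool(y)
    for x, y in zip(features, labels)
)
accuracy = correct / len(features)
```

In a real pipeline the batch would be streamed from disk instead of sampled from an in-memory list, which is what makes the approach workable when the data set is larger than memory.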

The researchers note that traditional approaches to earthquake detection generally fail to detect events buried in even modest levels of seismic noise. Waveform autocorrelation is generally the most effective method to identify these repeating earthquakes from seismograms, but the method is computationally intensive and not practical for long time series. One approach to reduce the computation is to select a small set of representative waveforms as templates and correlate only these with the full-length continuous time series.
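Template matching of this kind can be sketched as a normalized cross-correlation: slide the template along the continuous record and flag offsets where the correlation coefficient exceeds a threshold. The example below is a single-channel, pure-Python illustration; the function names, the 0.8 threshold, and the synthetic template and record are assumptions, not details from the paper.

```python
import math
import random

def normalized_xcorr(template, series):
    """Correlation coefficient between the template and every window of series."""
    n = len(template)
    t_mean = sum(template) / n
    t_centered = [v - t_mean for v in template]
    t_norm = math.sqrt(sum(v * v for v in t_centered))
    scores = []
    for start in range(len(series) - n + 1):
        window = series[start:start + n]
        w_mean = sum(window) / n
        w_centered = [v - w_mean for v in window]
        w_norm = math.sqrt(sum(v * v for v in w_centered))
        denom = t_norm * w_norm
        if denom == 0.0:
            scores.append(0.0)  # flat window: correlation undefined, treat as 0
        else:
            dot = sum(a * b for a, b in zip(t_centered, w_centered))
            scores.append(dot / denom)
    return scores

def detect(template, series, threshold=0.8):
    """Offsets whose correlation with the template reaches the threshold."""
    return [i for i, s in enumerate(normalized_xcorr(template, series))
            if s >= threshold]

# Synthetic record: low-level noise with two scaled copies of the template.
rng = random.Random(1)
template = [rng.gauss(0, 1) for _ in range(20)]   # stand-in for a known event
series = [rng.gauss(0, 0.02) for _ in range(200)]
for offset in (50, 130):
    for k, v in enumerate(template):
        series[offset + k] += 0.5 * v             # a weaker repeat of the event

scores = normalized_xcorr(template, series)
hits = detect(template, series)
```

The cost is visible in the nested loops: every template is compared against every window of the record, so the work grows with both the length of the record and the size of the template library.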

Bringing machine learning to bear on the problem isn’t new. Recently, an unsupervised earthquake detection method, referred to as Fingerprint and Similarity Thresholding (FAST), has succeeded in reducing the complexity of the template matching approach.

“FAST extracts features, or fingerprints, from seismic waveforms, creates a bank of these fingerprints, and reduces the similarity search through locality-sensitive hashing. The scaling of FAST has shown promise with near-linear scaling to large data sets,” write the researchers.
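One common way to realize a hashed similarity search over binary fingerprints is MinHash with banding, sketched below. This is an illustration of the general locality-sensitive hashing idea, not the FAST code itself: the fingerprints are toy sets of active bit indices, and the function names, hash family, and band sizes are assumptions.

```python
import random
from collections import defaultdict
from itertools import combinations

PRIME = 2_147_483_647  # modulus for the hash family; must exceed every index

def minhash_signature(fingerprint, hash_params):
    """fingerprint: non-empty set of active bit indices (ints below PRIME)."""
    return [min((a * x + b) % PRIME for x in fingerprint)
            for a, b in hash_params]

def candidate_pairs(fingerprints, n_hashes=20, rows_per_band=2, seed=0):
    """Return index pairs that share a bucket in at least one band."""
    rng = random.Random(seed)
    hash_params = [(rng.randrange(1, PRIME), rng.randrange(PRIME))
                   for _ in range(n_hashes)]
    signatures = [minhash_signature(fp, hash_params) for fp in fingerprints]
    pairs = set()
    for band_start in range(0, n_hashes, rows_per_band):
        buckets = defaultdict(list)
        for idx, sig in enumerate(signatures):
            key = tuple(sig[band_start:band_start + rows_per_band])
            buckets[key].append(idx)
        # Only fingerprints that land in the same bucket are ever compared.
        for members in buckets.values():
            pairs.update(combinations(members, 2))
    return pairs

fingerprints = [
    {1, 5, 9, 12, 20},        # event A
    {100, 101, 102, 103},     # unrelated signal, shares no bits with A
    {1, 5, 9, 12, 20},        # exact repeat of event A
    {1, 5, 9, 12, 21},        # near-repeat of A (may or may not be paired)
]
pairs = candidate_pairs(fingerprints)
```

Identical fingerprints always share every bucket, and fingerprints with no bits in common never do, so the search skips most dissimilar pairs instead of comparing everything against everything.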

Their work poses the problem as one of supervised classification – ConvNetQuake is trained on a large data set of labeled raw seismic waveforms and learns a compact representation that can discriminate seismic noise from earthquake signals. The waveforms are no longer classified by their similarity to other waveforms, as in previous work.

“Instead, we analyze the waveforms with a collection of nonlinear local filters. During the training phase, the filters are optimized to select features in the waveforms that are most relevant to classification. This bypasses the need to store a perpetually growing library of template waveforms. Owing to this representation, our algorithm generalizes well to earthquake signals never seen during training. It is more accurate than state-of-the-art algorithms and runs orders of magnitude faster,” they write. The figure below shows the data sets used.

The limitation of the methodology, they say, is the size of the training set required for good performance in earthquake detection and location. “Data augmentation has enabled great performance for earthquake detection, but larger catalogs of located events are needed to improve the performance of our probabilistic earthquake location approach. This makes the approach ill-suited to areas of low seismicity or areas where instrumentation is recent but well-suited to areas of high seismicity rates and well-instrumented.”

Link to paper: http://advances.sciencemag.org/content/4/2/e1700578/tab-pdf

Link to article discussing the work on The Verge: https://www.theverge.com/2018/2/14/17011396/ai-earthquake-detection-oklahoma-neural-network