In a major refresh of its Z Series chips, IBM is adding on-chip AI acceleration capabilities to allow enterprise customers to perform deep learning inferencing while transactions are taking place, so they can capture business insights and fight fraud in real time.

IBM unveiled the latest Z chip today (Monday) at the annual Hot Chips 33 conference, which is being held virtually due to the ongoing COVID-19 pandemic. This will be the first Z chip, used in IBM’s System Z mainframes, that won’t follow the numeric naming pattern used in the past. Instead of following the previous z15 chip with a z16 moniker, the new processor is being called IBM Telum (telum is Latin for javelin).

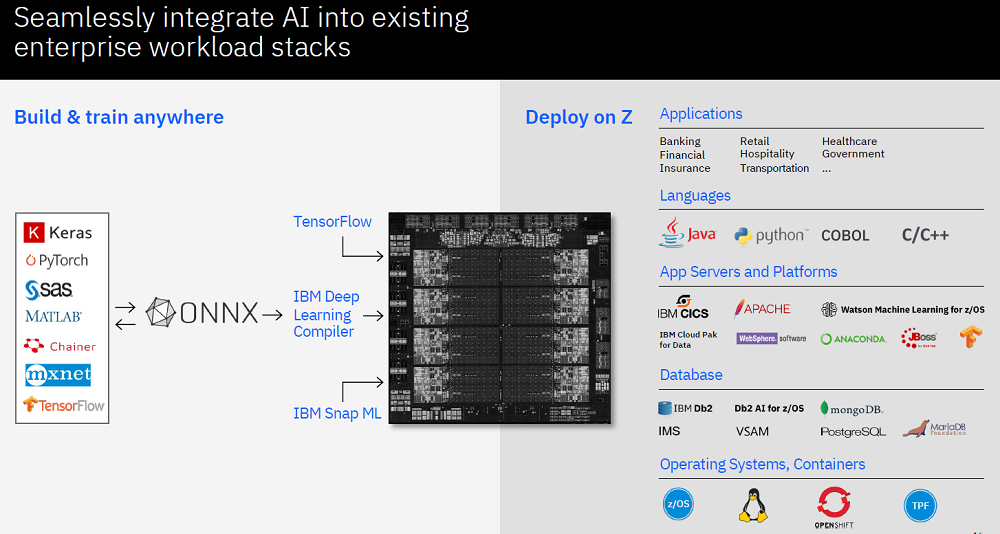

This will be IBM’s first processor to include on-chip AI acceleration, according to the company. Designed for customers across a wide variety of uses, including banking, finance, trading, insurance applications and customer interactions, the Telum processors have been in development for the past three years. The first Telum-based systems are planned for release in the first half of 2022.

One of the major strengths of the new Telum chips is that they are designed to enable applications to run efficiently where their data resides, giving enterprises more flexibility with their most critical workloads, Ross Mauri, the general manager of IBM Z, said in a briefing with reporters on the announcement before Hot Chips.

“From an AI point of view, I have been listening to our clients for several years and they are telling me that they can’t run their AI deep learning inferences in their transactions the way they want to,” said Mauri. “They really want to bring AI into every transaction. And the types of clients I am talking to are running 1,000, 10,000, 50,000 transactions per second. We are talking high volume, high velocity transactions that are complex, with multiple database reads and writes and full recovery for … transactions in banking, finance, retail, insurance and more.”

Integrating mid-transaction AI inferencing into the Telum chips will be a huge breakthrough for fraud detection, said Mauri.

“You hear about fraud detection all the time,” he said. “Well, I think we are going to be able to move from fraud detection to fraud prevention. I think this is a real game changer when it comes to the business world, and I am really excited about that.”

Inside the Telum Architecture

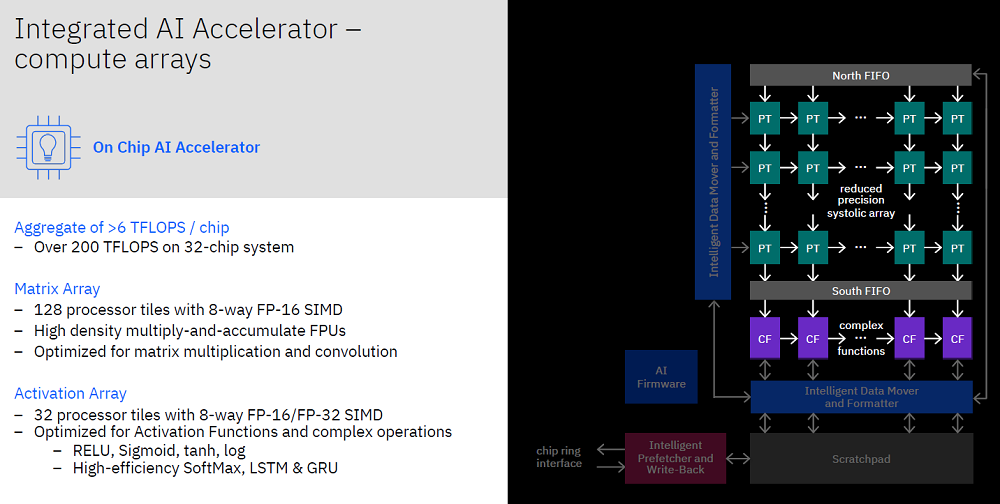

Christian Jacobi, an IBM distinguished engineer and the chief architect for IBM Z processor design, said that the Telum chip design is specifically optimized to run these kinds of mission critical, heavy duty transaction processing and batch processing workloads, while ensuring top-notch security and availability.

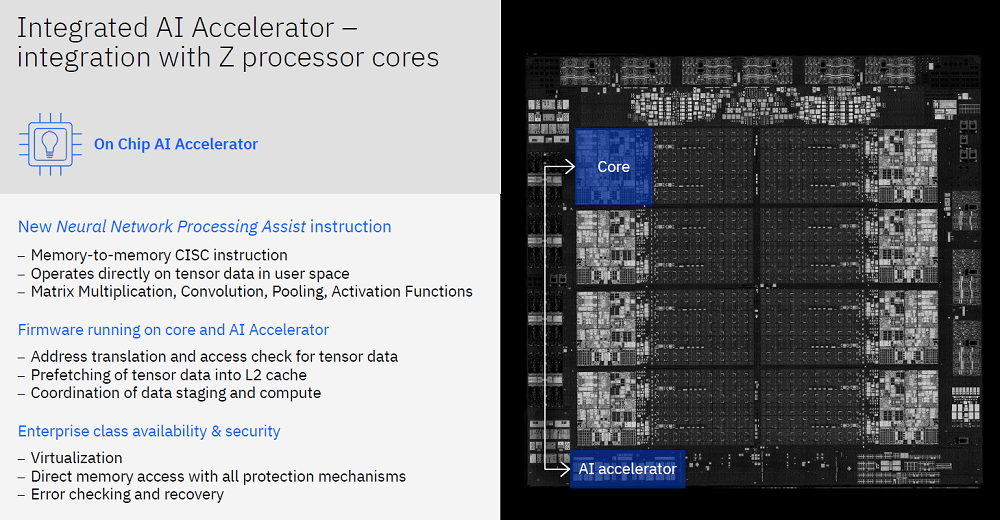

“We have designed this accelerator using the AI core coming from the IBM AI research center,” said Jacobi, describing a collaboration among the IBM Research team, the IBM Z chip design team and the IBM Z firmware and software development team. It is the first IBM chip created using technology from the IBM Research AI Hardware Center.

“The goal for this processor and the AI accelerator was to enable embedding AI with super low latency directly into the transactions without needing to send the data off-platform, which brings all sorts of latency inconsistencies and security concerns,” said Jacobi. “Sending data over a network, oftentimes personal and private data, requires cryptography and auditing of security standards that creates a lot of complexity in an enterprise environment. We have designed this accelerator to operate directly on the data using virtual address mechanisms and the data protection mechanisms that naturally apply to any other thing on the IBM Z processor.”

To achieve the low latencies, IBM engineers connected the accelerator directly to the on-chip cache infrastructure, so that it can directly access model data and transaction data from the caches, he explained. “It enables low batch counts so that we do not have to wait for multiple transactions to arrive of the same model type. All of that is geared towards enabling millisecond range inference tasks so that they can be done as part of the transaction processing without impacting their service level agreements … and have the results available to be able to utilize the AI inference result as part of the transaction processing.”
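To see why low batch counts matter at these volumes, consider a rough back-of-envelope sketch. It assumes evenly spaced arrivals that all use the same model, and the batch size of 32 is a hypothetical figure chosen for illustration, not one IBM cited. The function name `batch_fill_wait_ms` is likewise made up for this example.

```python
def batch_fill_wait_ms(batch_size: int, tx_per_second: float) -> float:
    """Milliseconds the first transaction in a batch waits for the batch to fill.

    Simplifying assumptions (not from IBM): arrivals are evenly spaced and
    every transaction uses the same model.
    """
    if batch_size < 1 or tx_per_second <= 0:
        raise ValueError("batch_size must be >= 1 and tx_per_second must be > 0")
    # The first arrival waits for the remaining (batch_size - 1) arrivals.
    return (batch_size - 1) / tx_per_second * 1000.0


# Transaction rates quoted by Mauri; the batch size of 32 is hypothetical.
for rate in (1_000, 10_000, 50_000):
    for batch in (1, 32):
        wait = batch_fill_wait_ms(batch, rate)
        print(f"{rate:>6} tx/s, batch of {batch:>2}: {wait:.2f} ms waiting")
```

Under these assumptions, a batch of one adds no waiting time at all, while a batch of 32 at 1,000 transactions per second would leave the first transaction waiting roughly 31 milliseconds before inference could even start, which would consume much of a millisecond-range budget.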

The new Telum chips are manufactured for IBM by Samsung using a 7nm Extreme Ultraviolet Lithography (EUV) process.

The chips have a new design compared to IBM’s existing z15 chips, according to Jacobi. Today’s z15 chips are packaged in single-chip modules, but the Telum chips will be packaged in pairs in a dual-chip module.

“Four of those modules will be plugged into one drawer, basically a motherboard with four sockets,” he said. “In the prior generations, we used two different chip types – a processor chip and a system control chip that contained a physical L4 cache, and that system control hub chip also acted as a hub whenever two processor chips needed to communicate with each other.”

Dropping the system control chip enabled the designers to reduce the latencies, he said. “When one chip needs to talk to another chip, we can implement a flat topology, where each of the eight chips in a drawer has a direct connection to the seven other chips in the drawer. That optimizes latencies for cache interactions and memory access across the drawer.”
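The flat topology Jacobi describes amounts to a fully connected graph of eight chips. The short sketch below simply counts the point-to-point connections that implies; it is an illustration of the arithmetic, not a model of IBM's actual interconnect, and the variable names are invented for the example.

```python
from itertools import combinations

CHIPS_PER_DRAWER = 8  # from the quote above: eight chips in a drawer

# In a flat (fully connected) topology, every unordered pair of chips
# shares one direct connection.
links = list(combinations(range(CHIPS_PER_DRAWER), 2))

# Build the set of direct neighbours for each chip from the link list.
neighbours = {chip: set() for chip in range(CHIPS_PER_DRAWER)}
for a, b in links:
    neighbours[a].add(b)
    neighbours[b].add(a)

print(f"direct connections in the drawer: {len(links)}")
print(f"direct neighbours per chip: {len(neighbours[0])}")
```

Eight chips that each connect to seven others work out to 28 distinct connections, since each connection is shared by two chips (8 × 7 / 2). Any chip reaches any other in a single hop, rather than passing through a separate hub chip as in the prior generations.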

Jacobi said that the Telum chips will provide a 40 percent performance improvement at the socket level over the company’s previous z15 chips, a gain boosted in part by the extra cores per socket.

Each Telum processor contains eight processor cores and uses a deep super-scalar, out-of-order instruction pipeline. The chip runs at a clock frequency of more than 5GHz, and its redesigned cache and chip-interconnection infrastructure provides 32MB of cache per core and can scale to 32 Telum chips. The dual-chip module design contains 22 billion transistors and 19 miles of wire on 17 metal layers.
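Multiplying out the figures above gives a sense of scale. The totals in this sketch are simple products of the numbers in the article, not figures IBM stated, and they say nothing about how the cache is actually shared among cores or chips.

```python
# Figures taken from the article.
CORES_PER_CHIP = 8
CACHE_PER_CORE_MB = 32
MAX_CHIPS = 32

# Derived values: plain multiplication, not IBM-published totals.
cache_per_chip_mb = CORES_PER_CHIP * CACHE_PER_CORE_MB
max_cores = CORES_PER_CHIP * MAX_CHIPS
total_cache_mb = cache_per_chip_mb * MAX_CHIPS

print(f"cache per chip: {cache_per_chip_mb} MB")
print(f"cores in a 32-chip system: {max_cores}")
print(f"summed cache across 32 chips: {total_cache_mb} MB")
```

That works out to 256MB of cache per chip and 256 cores in a fully scaled 32-chip system.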

About That Z Series Name Change

The upcoming Telum chips will show up in IBM’s Z Series and LinuxONE systems as the main processor chip for both product lines, said IBM’s Mauri. They are not superseding the Z Series chips, he said.

So, does that mean that z16, z17 and other future Z chips are no longer on the roadmap?

“No,” said Mauri. “It just means that we never named our chips before. We are naming it this time because we are proud of the innovation and breakthroughs and think that it is unique in what it does. And that is the only thing. I think z15 will still be around and there will be future generations, many future generations.”

Read the full version of this story with analyst insights at sister publication EnterpriseAI.news.