Intel is paring projects and products amid financial struggles, but AI products are taking on a major role as the company tweaks its chip roadmap to account for more computing targeted specifically at artificial intelligence.

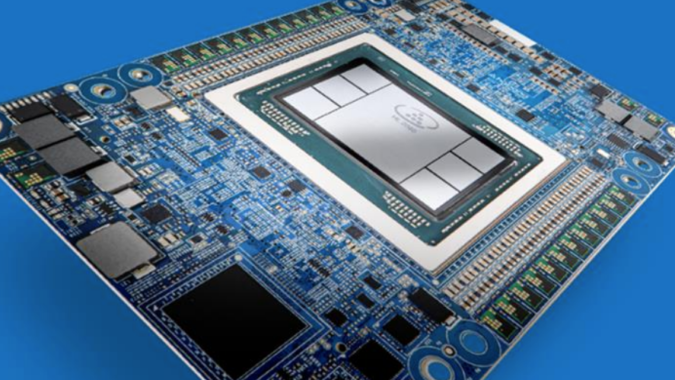

One product line that will not be cut is Habana Labs’ Gaudi AI chips, with development of future versions in full swing. Gaudi technology could also make its way into other Intel chips alongside the company’s GPUs.

Intel is preparing to share more details on its Gaudi3 AI chip later this year, said Eitan Medina, chief operating officer at Habana Labs, a division of Intel, in an interview with Cambrian AI Research that was posted to YouTube on Saturday.

“You can expect not too long after that we will be making it available for customers. We have a strong roadmap for Gaudi architecture,” Medina said.

Medina shared some information on the new Gaudi3 chip. It will be made using the 5nm process, and is a process shrink of Gaudi2, which was made using the 7nm process. Gaudi3 will have much more memory, compute and networking than its predecessor, Medina said.

An Intel spokesman said Gaudi3 will have upgrades to memory and connectivity, alongside other new features. The company declined to share additional details on the chips or shipment dates.

The IT market is at a crossroads with inflationary measures and poor economic outlook for 2023 curbing IT spending, Gartner said in a study released earlier this month.

Spending on datacenter systems, which handle AI workloads, is expected to reach $213 billion in 2023, growing by just 0.7%, down from 12% growth in 2022. Total IT spending for 2023 will decline by 0.2% to $4.5 trillion, compared with 2.4% growth in 2022, according to Gartner’s forecast.

IDC last year estimated worldwide spending on AI systems at $118 billion in 2022 and projected it would exceed $300 billion by 2026. Companies are looking to cut costs, and AI provides techniques to streamline processes and more efficient ways to conduct business, IDC said in a release.
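For a sense of scale, the two IDC figures imply a compound annual growth rate of roughly 26%. The short calculation below is a back-of-the-envelope sketch, not IDC's own methodology; the function name is hypothetical, and because IDC said spending would "exceed" $300 billion, the result is a lower bound.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate that takes start_value to end_value."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# IDC figures quoted in the article, in billions of US dollars.
SPEND_2022 = 118.0
SPEND_2026_FLOOR = 300.0  # "exceed $300 billion", so treat this as a floor

min_cagr = implied_cagr(SPEND_2022, SPEND_2026_FLOOR, years=2026 - 2022)
print(f"Implied minimum annual growth, 2022-2026: {min_cagr:.1%}")
```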

“AI systems can support people-oriented tasks and improve their capabilities through technologies such as Conversational AI and Image Processing, used to interact with clients and potential clients in a way that these people are prepared to accept,” said Mike Glennon, senior market research analyst at IDC.

Audiences have embraced AI through technologies like ChatGPT, which has taken the world by storm and shown the transformational potential of AI. Since the introduction of ChatGPT last year, the servers have sometimes been at full capacity and inaccessible to users. That highlights the need for faster AI chips, and Gaudi has shown potential to handle large language models powering applications like ChatGPT.

Gaudi2 showed mettle in handling training models in benchmarks published by MLCommons in November. The AI chip posted better training times for BERT – which is for large language models – than Nvidia’s A100 chips, but lagged the latest H100, which is based on Hopper architecture. The Gaudi2 chip had not been optimized for BERT.

The chipmaker may also cross-pollinate its diverse AI chip lines with the successor to Gaudi3.

“We are also working to identify opportunities … to combine the best of both worlds in both the Intel GPU architecture as well as the Habana Gaudi architecture. How to make the best of worlds when we think about the fourth generation,” Medina said.

The idea of integrating Habana could include taking elements of the GPU codenamed Ponte Vecchio, which is targeted at high-performance computing.

Gaudi is one of many Intel AI chips that the company has made available to customers in different markets. Gaudi3 is targeted at deep learning in enterprise computing. The Ponte Vecchio GPU is targeted at high-performance computing and AI applications in supercomputing environments. The company’s FPGAs are also being used for AI.

But no single Intel AI chip has been able to match the runaway success of Nvidia’s GPUs. The Gaudi2 chip was delayed, but is now available on Intel’s Developer Cloud to test and prototype applications.

The Gaudi chips “address an industry gap by providing customers with high-performance, high-efficiency deep learning compute choices for both training workloads and inference deployments in the data center while lowering the AI barrier to entry for companies of all sizes,” Intel said in its annual report published last week.

“The Habana Gaudi chip is important to them for training with the best power and cost efficiency. They have customers using it, but haven’t revealed the extent yet. I’d say it is an important alternative to GPUs,” said Kevin Krewell, principal analyst at Tirias Research.

Intel is throwing a number of AI chips into the market, and is providing many options to customers testing out emerging AI models. Customers typically choose chips that are optimized for their AI training model.

Consulting firm McKinsey has pegged the AI market to be worth $1 trillion by 2030, but also said it was still experimental and in the early phases of commercial deployment. As an example, in the industrial sector, implementations are still focused on how the technology can improve on conventional problem-solving approaches, McKinsey said.

Intel’s AI hardware development was previously concentrated around a division called AXG, which developed high-performance and AI accelerators. The division was recently restructured, with its head, Raja Koduri, reassigned to a tech design role. The Habana Labs unit, which is based in Israel, operates as an independent unit.

Intel has many other AI projects underway. The Sapphire Rapids chips implement AI-specific acceleration blocks, including a technology called AMX (Advanced Matrix Extensions), which provides on-chip inferencing and addresses challenges in AI and machine learning processing by speeding up data movement and compression. The acceleration inside the CPU allows for efficient matrix multiplications. AMX operates on the INT8 and Bfloat16 data types, accumulating results as 32-bit integers and 32-bit floating point values, respectively. The company has claimed that AMX can help the chip achieve up to 10 times higher PyTorch real-time inference and training performance.
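To illustrate why low-precision data types such as INT8 matter for matrix math, the sketch below quantizes two small matrices to 8-bit integers, multiplies them with wider integer accumulation, and scales the result back to floating point. This is plain Python meant only to show the arithmetic idea; it does not use AMX instructions, and the function names and the scale value are hypothetical choices for the example.

```python
from typing import List

INT8_MIN, INT8_MAX = -128, 127


def quantize(values: List[List[float]], scale: float) -> List[List[int]]:
    """Map floats to INT8 by dividing by scale, rounding, and clamping."""
    return [
        [max(INT8_MIN, min(INT8_MAX, round(v / scale))) for v in row]
        for row in values
    ]


def int8_matmul(a: List[List[int]], b: List[List[int]]) -> List[List[int]]:
    """Multiply two INT8 matrices, summing products in a wider accumulator."""
    rows, inner, cols = len(a), len(b), len(b[0])
    if any(len(row) != inner for row in a):
        raise ValueError("inner dimensions must match")
    out = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            acc = 0  # Python ints are unbounded; hardware would use 32 bits
            for k in range(inner):
                acc += a[i][k] * b[k][j]
            out[i][j] = acc
    return out


# Assumed scale: a power of two keeps this toy example exact.
SCALE = 1 / 64

a = [[0.5, -1.0], [0.25, 0.75]]
b = [[1.0, 0.0], [0.5, -0.5]]

qa, qb = quantize(a, SCALE), quantize(b, SCALE)
q_product = int8_matmul(qa, qb)

# Undo both scale factors to recover approximate floating point results.
result = [[v * SCALE * SCALE for v in row] for row in q_product]
print(result)
```

The inputs take a quarter of the storage of 32-bit floats, which is the basic trade that makes INT8 attractive for inference; real workloads lose some accuracy in the rounding step that this toy example avoids.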

Beyond Nvidia, Intel will have to contend with rivals such as AMD, which is implementing Xilinx offerings such as FPGAs and ASICs as a core part of its offerings. AMD has developed an AI architecture called XDNA, which was first integrated into an AI accelerator called Alveo V70 (which is more of an inferencing chip). AMD earlier this year showed off its latest MI300 chip, which is an integrated chip with GPU acceleration for high-performance computing and machine learning.

While Intel will continue to back Gaudi, other projects are being discontinued. The company scrapped plans for a $700 million datacenter research facility in Hillsboro, Oregon, as part of its ongoing cost-cutting initiative, which also involves laying off employees. Intel also discontinued its Pathfinder for RISC-V accelerator program, which gave companies access to RISC-V chip development tools. Intel last year said it would pump $1 billion to help companies develop chips and software based on the RISC-V architecture.

Intel has also discontinued development of some networking gear being developed by the Network and Edge group, which is also called NEX.

“NEX continues to do well and is a core part of our strategic transformation, but we will end future investments in our network switching product line, while still fully supporting existing products and customers. Since my return, we have exited seven businesses, providing in excess of $1.5 billion in savings,” said Intel’s CEO Pat Gelsinger, during the company’s most recent earnings call in late January.

Intel is also trimming its research and development spending, which has progressively gone up since Pat Gelsinger took over as CEO in 2021. R&D spending in 2022 was $17.5 billion, up from $15.2 billion in 2021. Intel now expects a “$400 million decrease in R&D expenses” in 2023, it said in its business outlook published last week after the fourth-quarter earnings call.
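Put in proportion, the planned cut is small next to the prior year's increase. The quick calculation below is a sketch that assumes the $400 million decrease is measured against the full-year 2022 total, which the outlook as quoted here does not spell out.

```python
# R&D figures from the article, in billions of US dollars.
RD_2021 = 15.2
RD_2022 = 17.5
PLANNED_CUT_2023 = 0.4  # assumption: measured against the 2022 total

growth_2022 = (RD_2022 - RD_2021) / RD_2021
cut_share = PLANNED_CUT_2023 / RD_2022
implied_2023 = RD_2022 - PLANNED_CUT_2023

print(f"2022 R&D growth over 2021: {growth_2022:.1%}")
print(f"Planned 2023 cut as a share of 2022 R&D: {cut_share:.1%}")
print(f"Implied 2023 R&D spending: ${implied_2023:.1f} billion")
```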