At the hybrid Intel Vision event today, Intel’s Habana Labs team launched two major new products: Gaudi2, the second generation of the Gaudi deep learning training processor; and Greco, the successor to the Goya deep learning inference processor. Intel says that the processors offer significant speedups relative to their predecessors and the competition. Gaudi2 processors are now available to Habana’s customers, while Greco will begin sampling to select customers in the back half of the year.

Habana Labs was founded in 2016 with the aim of creating world-class AI processors, and just three years later was snatched up by Intel for a cool $2 billion. The first-generation Goya inference processors debuted when Habana emerged from stealth in 2018, while the first-generation Gaudi training processors launched in 2019, right ahead of the acquisition by Intel.

The announcements thus mark a major milestone for the now-Intel-owned team: while Gaudi and Goya had been made available in a variety of form factors over the last few years, these are the first new processors released by Habana Labs since its acquisition.

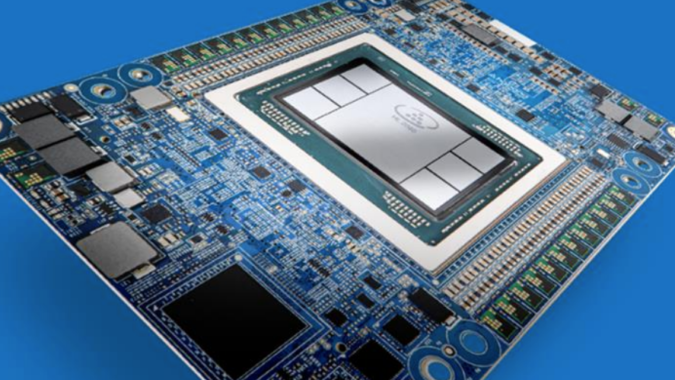

Both Gaudi2 and Greco made the leap from a 16nm to a 7nm process (via TSMC, the manufacturer for both). In Gaudi2’s case, the 10 Tensor processor cores present in the first-gen Gaudi training processor have increased to 24, while the in-package memory capacity has tripled from 32GB (HBM2) to 96GB (HBM2E) and the on-board SRAM has doubled from 24MB to 48MB. “That’s the first and only accelerator that integrates such an amount of memory,” Eitan Medina, COO of Habana Labs, said of the HBM2E in Gaudi2. The processor has a TDP of 600W (compared to 350W for Gaudi) but, Medina said, still uses passive cooling and does not require liquid cooling.
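For readers who want the generational changes side by side, the short Python sketch below computes the Gaudi2-to-Gaudi ratio for each figure quoted above. The dictionary name `SPECS` and the helper `generational_ratio` are made up for this illustration; the numbers are only those stated in this article, not a full spec sheet.

```python
# Figures quoted in the article: (first-gen Gaudi, Gaudi2).
SPECS = {
    "Tensor processor cores": (10, 24),
    "In-package HBM (GB)": (32, 96),
    "On-board SRAM (MB)": (24, 48),
    "TDP (W)": (350, 600),
}


def generational_ratio(name: str) -> float:
    """Return the Gaudi2 value divided by the first-gen Gaudi value."""
    gaudi, gaudi2 = SPECS[name]
    return gaudi2 / gaudi


if __name__ == "__main__":
    for name, (gaudi, gaudi2) in SPECS.items():
        print(f"{name}: {gaudi} -> {gaudi2} ({generational_ratio(name):.2f}x)")
```

On these figures, memory capacity grows faster (3×) than TDP (roughly 1.7×), so the quoted HBM capacity per watt of TDP rises between generations.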

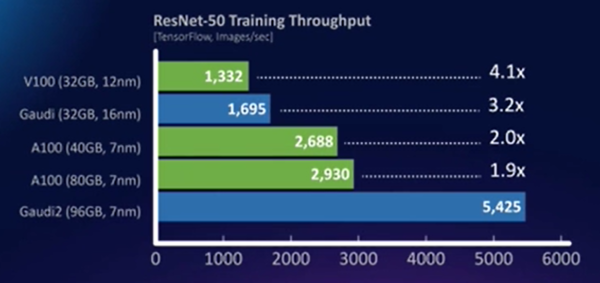

Intel showed a few comparisons between Gaudi2, its predecessor, and the competition on a handful of popular tasks. On ResNet-50, for instance, Gaudi2 achieved 3.2× the output of Gaudi, 1.9× that of an 80GB Nvidia A100 and 4.1× that of an Nvidia V100. On some other benchmarks, the gap between Gaudi2 and the 80GB A100 was even more pronounced: for BERT Phase-2 training throughput, Gaudi2 bested the 80GB A100 by a factor of 2.8. “Comparing to both [the] V100 and A100 is important because both are actually heavily used in the cloud and on-prem,” explained Medina.
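The ResNet-50 multiples above also imply how the other three parts compare with one another. The Python sketch below derives those pairwise ratios. It assumes all three multiples come from comparable runs of the same benchmark, which the article does not confirm, so the derived numbers are back-of-the-envelope estimates rather than figures Intel published. The names `GAUDI2_MULTIPLE` and `implied_ratio` are invented for this example.

```python
# ResNet-50 throughput of Gaudi2 relative to each part, as quoted in the article.
GAUDI2_MULTIPLE = {"Gaudi": 3.2, "A100 80GB": 1.9, "V100": 4.1}


def implied_ratio(a: str, b: str) -> float:
    """Estimate throughput of part `a` relative to part `b`.

    If Gaudi2 = m_a * a and Gaudi2 = m_b * b, then a / b = m_b / m_a.
    Assumption: both multiples were measured under comparable conditions.
    """
    return GAUDI2_MULTIPLE[b] / GAUDI2_MULTIPLE[a]


if __name__ == "__main__":
    pairs = [("A100 80GB", "Gaudi"), ("A100 80GB", "V100"), ("Gaudi", "V100")]
    for a, b in pairs:
        print(f"{a} vs {b}: about {implied_ratio(a, b):.2f}x")
```

Under that assumption, the 80GB A100 would land at roughly 1.7× the first-gen Gaudi and roughly 2.2× the V100 on this task.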

Interestingly, Gaudi2 also adds support for FP8 in order to (per Medina) “enable faster training and better utilization of memory for very large models.” FP8 also made an appearance in Nvidia’s Hopper announcement back in March, and Tesla’s internal supercomputers support CFP8 (“configurable FP8”).

Gaudi2, now available to Habana customers, comes in a mezzanine card form factor and as part of the HLS-Gaudi2 server, which is intended to support customer evaluations of Gaudi2. The server is equipped with eight Gaudi2 cards and a dual-socket Intel Xeon subsystem. For more substantive deployments, Habana is partnering with Supermicro to bring a Gaudi2-equipped training server (the Supermicro Gaudi2 Training Server) to market in the second half of 2022 and working with DDN to develop a variant of the Gaudi Training Server augmented with DDN’s AI-focused storage. Further, a thousand Gaudi2s have already been deployed to Habana’s datacenters in Israel, where they are being used for software optimization and to advance development of the Gaudi3 processor.

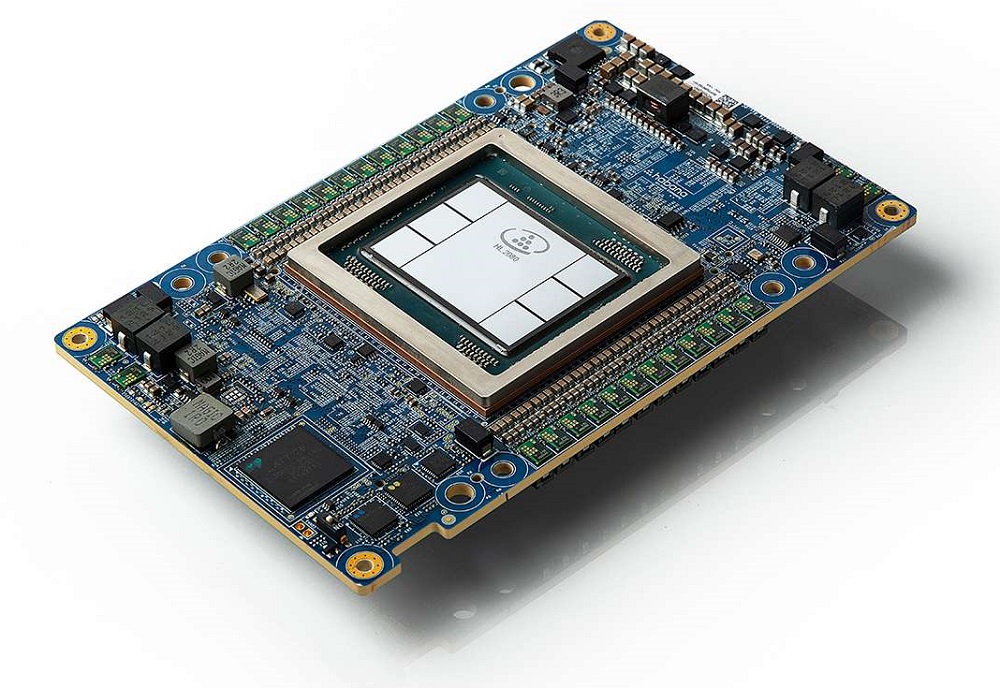

Next: Greco, successor to the Goya inference processor. “Greco takes the same highly efficient Goya to 7nm, essentially doing the same thing we’ve done with Gaudi2,” Medina said. “We’ve boosted the memory on-card from DDR4 to LPDDR5, essentially getting 5× the bandwidth, and also pushing the on-chip memory from 50 to 128MB.”

Greco moves from a dual-slot to a single-slot form factor, dropping the TDP from 200W to 75W. “That compact form factor will allow customers to actually double the number of accelerators in the same host system,” Medina said. Otherwise, Intel revealed fewer details about Greco, which is slated to ship in the back half of the year, than it did about Gaudi2.

Gaudi2 and Greco serve as the latest entries in an increasingly crowded AI accelerator arms race, with stiff competition not only from Nvidia’s GPUs, but also from other specialized accelerators like those from Cerebras, Graphcore and SambaNova. Intel’s comparisons between its Habana products and Nvidia products, of course, also come with a significant asterisk—they don’t include comparisons against Nvidia’s forthcoming H100 GPUs, which promise substantial speedups relative to the A100s and are slated for shipment in Q3 2022. Habana even faces a bit of internal competition from products like Intel’s forthcoming Ponte Vecchio GPUs, which are also advertised as high-performance accelerators for AI workloads.

“Intel sees Habana as a complementary technology to the Ponte Vecchio GPU for processing AI at scale, and the new version [of Gaudi] looks pretty impressive,” said Karl Freund, founder and principal analyst of Cambrian-AI Research, in a comment to HPCwire. “Nvidia Hopper has a specialized engine for Transformer models, however, and we will have to get more performance data from both companies to properly compare.”

“I think that Intel is making an important distinction as to the role these two processors play, albeit maybe a slightly fuzzy one if you’re not looking at the details,” added Peter Rutten, research vice president with IDC’s Worldwide Infrastructure Practice, in another comment to HPCwire. “Ponte Vecchio is for running large-scale HPC, AI, and graphics workloads that require mixed precision as well as scalability and flexibility. This is useful for experimentation, for example. For AI, you will not get the relentless price-performance that an ASIC like Gaudi2 can offer at scale, but you will have a scalable, flexible environment within the Xe family.”

Intel’s Sandra Rivera, executive vice president and general manager of the Datacenter and AI Group, summarized the launch of the new Habana processors as a “prime example of Intel executing on its AI strategy to give customers a wide array of solution choices—from cloud to edge—addressing the growing number and complex nature of AI workloads.”