If you need a reason to join the Periscope craze, how about getting an inside look at the fastest supercomputer in the United States? Today, staff at the Oak Ridge Leadership Computing Facility (OLCF), the DOE Office of Science user facility at Oak Ridge National Laboratory (ORNL), took the Periscope audience on a tour of Titan, the world’s second-largest supercomputer, built by Cray.

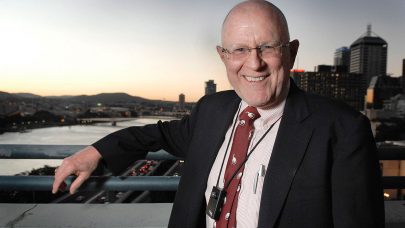

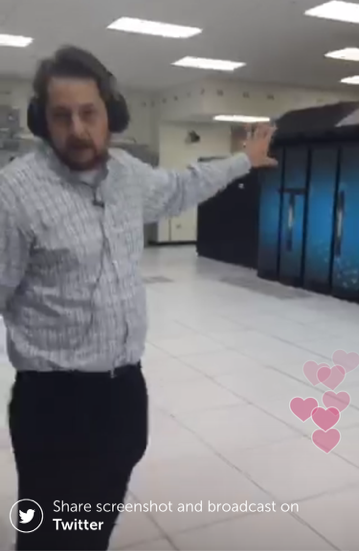

Conducting the tour were Bronson Messer, senior scientist in the Scientific Computing and Theoretical Physics Groups at ORNL, and Justin Whitt, deputy director at OLCF.

As Messer explained, the staff at OLCF undertakes numerical experiments and simulations that can’t be done anywhere else on the planet and couldn’t be done without a supercomputer like Titan. These include large-scale climate simulations, combustion simulations, and stellar astrophysics simulations. “All of these things require prodigious use of computing power and it’s a good thing we have Titan around,” said Messer, himself a computational astrophysicist at the National Center for Computational Sciences.

“Rated at 27-petaflops of peak compute [17.59-petaflops LINPACK], Titan is an order of magnitude improvement on the machines we had previously,” he continued. “The machine room is split into two sides, one side supercomputers — the other side mostly spinning disk drives, important for things like climate and astrophysics and combustion simulations, as well as any kind of nuclear simulation because ultimately you want to do computations on the supercomputers, be able to save the data on the disk drives and look at the data coming off those disk drives, visualize it, analyze it, and actually obtain science from all that number crunching that you’ve done.”

As anyone in supercomputing knows, doing all of this requires a datacenter like the one that houses Titan, which sits in a nearly half-acre machine room along with a lot of supporting infrastructure.

Deputy Director Justin Whitt continues the tour of the 20,000-square-foot room, which he compares to the area of four basketball courts, except that this room has a 100-gigabit connection to the outside world, allowing for massive data movement. There’s also about 20MW of power going into the room, of which Titan uses about 6MW.

Before entering the area through a controlled access point, Whitt and the camera crew put on hearing protection against the noise of the humming processors and, above all, the fans, which reaches 90 decibels and goes even higher in some areas.

Whitt proceeds to take the Periscope audience on a tour of the room, first mentioning the tile floor, which provides the inlet for traditional HVAC forced cooling that keeps the ambient temperatures in the upper 60s.

The datacenter is roughly divided into two areas, with data storage on one side and the computers themselves on the other, but from the start one gets a view of the front row of Titan, one of several rows that make up this massive machine. In total, Titan comprises 200 cabinets containing 18,688 compute nodes (4 nodes per blade, 24 blades per cabinet). Each of those nodes has a CPU and a GPU. Around the edges of the room, Whitt points out the supporting electrical and mechanical infrastructure required to operate Titan.
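The cabinet, blade, and node counts quoted above multiply out to slightly more slots than the stated compute-node total. A minimal Python sketch of that arithmetic follows. The names are illustrative, and reading the leftover slots as non-compute nodes (service or I/O nodes, for example) is an assumption rather than something stated on the tour.

```python
# Figures as quoted in the article.
CABINETS = 200
BLADES_PER_CABINET = 24
NODES_PER_BLADE = 4
COMPUTE_NODES = 18_688


def node_slots(cabinets: int, blades_per_cabinet: int, nodes_per_blade: int) -> int:
    """Total node slots if every blade in every cabinet is fully populated."""
    return cabinets * blades_per_cabinet * nodes_per_blade


total_slots = node_slots(CABINETS, BLADES_PER_CABINET, NODES_PER_BLADE)

# Assumption: slots not counted as compute nodes are used for something else
# (for example service or I/O nodes); the article does not say.
non_compute_slots = total_slots - COMPUTE_NODES

print(f"Total node slots:       {total_slots:,}")
print(f"Quoted compute nodes:   {COMPUTE_NODES:,}")
print(f"Slots not in the count: {non_compute_slots:,}")
```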

Whitt reiterates that Titan is roughly an order of magnitude more powerful than its predecessor, yet realizes that computational power while staying within roughly the same energy footprint. He attributes much of this nearly 5X increase in energy efficiency to the heterogeneous nature of the machine and the transition from the traditional CPU-only approach to using general-purpose graphics processors (GPGPUs).

“These are the same kind of chips used and optimized for gaming,” he notes. “This allows us to have the CPU direct some of the traffic to GPUs, which can execute thousands of tasks at once for certain types of calculations. Because of this we realize a lot of power and speed and efficiency from the GPU. They are extremely power efficient.”
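To put the efficiency discussion in numbers, the performance and power figures quoted during the tour can be turned into a rough flops-per-watt estimate. This is only a back-of-the-envelope sketch: it assumes the "about 6MW" figure applies to both the peak and the LINPACK ratings, which the tour does not confirm, and the names are illustrative.

```python
# Figures as quoted in the article.
PEAK_PFLOPS = 27.0      # peak rating, petaflops
LINPACK_PFLOPS = 17.59  # LINPACK rating, petaflops
POWER_MW = 6.0          # approximate power draw, megawatts (assumed for both ratings)


def gflops_per_watt(pflops: float, megawatts: float) -> float:
    """Convert petaflops and megawatts into gigaflops per watt."""
    flops = pflops * 1e15
    watts = megawatts * 1e6
    return flops / watts / 1e9


peak_efficiency = gflops_per_watt(PEAK_PFLOPS, POWER_MW)
linpack_efficiency = gflops_per_watt(LINPACK_PFLOPS, POWER_MW)

print(f"Peak:    {peak_efficiency:.2f} gigaflops per watt")
print(f"LINPACK: {linpack_efficiency:.2f} gigaflops per watt")
```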

Stopping on the edge of the row, Whitt points out the cabinets that control the cooling for Titan, both the liquid and air cooling, part of a massive advanced cooling system developed by Cray.

The cooling system uses two cooling loops, one filled with a refrigerant (R-134a) and the other with chilled water. Hoods at the top of the machine reveal stainless steel pipes that circulate the R-134a coolant. Fans at the bottom push the air upward at about 3,000 cubic feet per minute. As the air rises, heat from the machines transfers through a heat exchanger into the coolant. The heat exchange is enough to actually boil the coolant, turning it from a liquid to a gas. The coolant is then circulated back using a pump, and a chilled water system converts it back from a gas to a liquid. Whitt informs the audience that the delta T, or change in temperature, is 35 degrees Fahrenheit from top to bottom, with another delta T of about 13 degrees Fahrenheit from the chilled water.
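The airflow and temperature-rise figures above are enough for a rough estimate of how much heat the air stream carries. The sketch below uses the common HVAC rule of thumb for sensible heat in air near sea level, Q (BTU/hr) ≈ 1.08 × CFM × ΔT (°F). Treating 3,000 cubic feet per minute as the airflow through a single cabinet is an assumption, since the tour does not say what the figure covers.

```python
# Figures as quoted in the article.
AIRFLOW_CFM = 3000.0  # cubic feet per minute (assumed here to be per cabinet)
DELTA_T_F = 35.0      # air temperature rise, degrees Fahrenheit

# Rule-of-thumb constant for standard air near sea level.
SENSIBLE_HEAT_FACTOR = 1.08
BTU_PER_HR_TO_WATTS = 0.29307107


def sensible_heat_btu_per_hr(cfm: float, delta_t_f: float) -> float:
    """Approximate heat carried by an air stream, in BTU per hour."""
    return SENSIBLE_HEAT_FACTOR * cfm * delta_t_f


heat_btu_per_hr = sensible_heat_btu_per_hr(AIRFLOW_CFM, DELTA_T_F)
heat_kw = heat_btu_per_hr * BTU_PER_HR_TO_WATTS / 1000.0

print(f"Heat carried by the air: {heat_btu_per_hr:,.0f} BTU/hr (~{heat_kw:.1f} kW)")
```

Under that per-cabinet assumption, the result is of the same order as dividing Titan's roughly 6MW across its 200 cabinets, though this is a plausibility check and not a statement about how the system was designed.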

Whitt opens up a cabinet to reveal thick pipes on both sides, the source and return cooling. There’s also a high-speed network that connects all the nodes together.

Then he heads down a cooling aisle to take a look at some of the storage units, including Atlas, which take advantage of contained-aisle cooling. Whitt says they are enclosing parts of the datacenter so that cooling is concentrated where it’s most needed, for efficiency. Going through a sliding door, he points out how the floor grates are opened up so they can force the air through.

In addition to Atlas, which provides about 30PB of disk storage, the datacenter also contains about 30PB of tape storage that allows them to archive critical data.

“The computations are very expensive,” says Whitt. “This is a finite resource, expensive to deploy and operate. A lot of these datasets that are generated, say you’re simulating air flow over a wing, or you’re looking at molecular interactions, when you simulate that you get results that makes your model, that model has a very high cost associated with it, potentially. We want to make sure that when you simulate that model then has a very high cost you want to make sure that those that are critical to a certain community don’t have to recompute that – so we have this 30PB of archive tape storage.”

From there, Whitt takes his Periscope followers out the back side of the storage aisle into the relative quiet of an exterior hallway. He rejoins Messer, who explains that some of the largest multi-physics simulations can take weeks, months or even a fraction of a year to complete, using anywhere from a fifth to half of the machine. On average, though, they say it’s not unusual to have week-long simulations.

Since this virtual tour was aimed at a more general audience, Messer also takes a moment to explain the basics of what is meant by “flop” and “petaflop.” He notes that a really quick person can carry out an add or multiply calculation on two numbers with decimal points within about a second, effectively carrying out one floating point operation per second, while an average laptop or desktop computer is capable of about 2-3 gigaflops. Titan, with a peak performance of 27 petaflops, is theoretically capable of 27 quadrillion operations per second. This is difficult to put in human-scale terms, says Messer, but consider the roughly 11 billion people he says have ever lived: if each of them did one floating point operation per second for a lifetime of more than 600 years, all of that combined calculation still wouldn’t equal one day on Titan, he observes.
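Messer's comparison can be checked with a few lines of arithmetic. The sketch below takes the figures as quoted (27 petaflops, meaning 27 × 10^15 operations per second, 11 billion people, 600-year lifetimes, one operation per person per second) and compares the combined human total with one day of Titan running at peak. The names are illustrative, and the 365.25-day year is a simplifying assumption.

```python
# Figures as quoted in the article.
TITAN_PEAK_FLOPS = 27e15  # 27 petaflops = 27 * 10**15 operations per second
PEOPLE = 11e9             # people, as quoted
YEARS_PER_PERSON = 600    # lifetime per person, as quoted
OPS_PER_PERSON_PER_SECOND = 1

SECONDS_PER_DAY = 24 * 60 * 60
SECONDS_PER_YEAR = 365.25 * SECONDS_PER_DAY  # simplifying assumption

# Everything humanity could compute by hand under the quoted assumptions.
human_ops = PEOPLE * YEARS_PER_PERSON * SECONDS_PER_YEAR * OPS_PER_PERSON_PER_SECOND

# What Titan could do in one day at its theoretical peak.
titan_ops_per_day = TITAN_PEAK_FLOPS * SECONDS_PER_DAY

# How long Titan would need, at peak, to match the human total.
titan_hours = human_ops / TITAN_PEAK_FLOPS / 3600

print(f"Human total:       {human_ops:.3e} operations")
print(f"Titan, one day:    {titan_ops_per_day:.3e} operations")
print(f"Titan time needed: {titan_hours:.1f} hours at peak")
```

With these inputs the human total falls well short of a single Titan day, which matches the point Messer makes.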

“It’s as exciting as you might think to work on the second-largest supercomputer in the world,” Messer adds. “For a computational astrophysicist there’s probably no place else on the planet I’d rather be.”

Remember, videos recorded on the vertically-themed video app Periscope generally disappear after about 24 hours, but if you missed this one, you’re in luck, since the video is also available on ORNL’s YouTube channel.