As expected, Intel officially announced its 5th generation Xeon server chips codenamed Emerald Rapids at an event in New York City, where the focus was really on its new AI laptops.

The bad news for HPC customers is that there will be no version of the Xeon CPU Max chip with the Emerald Rapids CPU. Instead, Intel will continue to sell Xeon Max — which has integrated high-bandwidth memory of up to 64GB — with the previous 4th Gen Sapphire Rapids chip.

“We still continue to have customers that are either deploying or investigating deployments today on the existing Max,” said Ronak Singhal, senior fellow at Intel and chief architect of Xeon chips.

Intel is not upgrading Max with Emerald Rapids as it is still gauging customer interest in the Max chip. The company came back from Supercomputing 2023 and saw interest in the bandwidth-intensive chips, but Emerald Rapids is serving a different audience.

“When we created Xeon Max, it was HPC first, but now you see the rise of things like LLMs and the benefit from the bandwidth,” Singhal said.

Intel’s roadmap has no mention of a next-generation Xeon Max CPU. The roadmap points to the next-generation Xeon chip codenamed Granite Rapids, which is due out next year and which Intel could integrate with HBM.

Intel’s next GPU, Falcon Shores, is due out in 2025, and the chip maker will need to pair it with a new server CPU, which is likely to be the successor to Granite Rapids. But a lot could change on the memory and chip design front with new technologies emerging quickly.

But Emerald Rapids, which is targeted at the mainstream server audience, increases memory capacity and can expand it much further with support for CXL 1.1, which provides the hooks for memory and storage expansion. Memory capacity has become paramount with AI and other compute-intensive applications.

The server chip is more of an incremental improvement over the 4th Gen Xeon server chips codenamed Sapphire Rapids. Emerald Rapids provides speed boosts of between 1.13x and 1.69x, depending on the application, and up to a 34% improvement in power efficiency.

Emerald Rapids is the last of the Intel legacy Xeon chip designs, and the company’s focus is clearly on the next-generation Granite Rapids, which is a ground-up redesign of the server product.

Due out next year, Granite Rapids isn’t far behind Emerald Rapids. It will have a chiplet design, be made on an advanced manufacturing process (Intel 3), and have the new APX instruction set. It will also boast significant performance improvements.

But Emerald Rapids isn’t completely irrelevant. In fact, it will be a very important chip for customers looking to upgrade data centers on the current software and hardware stack, Singhal said.

Customers looking for server chip upgrades in existing infrastructure will see significant performance improvements with Emerald Rapids. Customers are looking to replace older 3rd-Gen and 2nd-Gen Xeon chips and will likely jump to Emerald Rapids.

Sapphire Rapids chips are already selling in the millions as hyperscalers and customers upgrade infrastructures for AI, setting up a healthy market for Emerald Rapids. Intel has already shipped its millionth Sapphire Rapids chip this quarter and hopes to surpass 2 million units, Intel CEO Pat Gelsinger said last month.

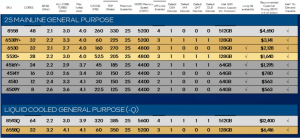

The top-line Emerald Rapids chip, the 5th gen Xeon 8592+, will have 64 cores, an improvement from the 60 cores in Sapphire Rapids. The chip will operate at a 1.9GHz frequency that can max out at 3.9GHz in turbo mode. It has 320MB of cache, draws 350 watts of power, and fits into two-socket systems. It costs a whopping $11,600.

Emerald Rapids supports DDR5 memory at 5600MT/s, an improvement from 4800MT/s in Sapphire Rapids. The chip will also make possible, for the first time, some real-world implementations of the Compute Express Link (CXL) 1.1 interface, Singhal said.
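To put the transfer rates in context, here is a rough back-of-the-envelope sketch of peak theoretical bandwidth per socket. It assumes a 64-bit (8-byte) data path per DDR5 channel and uses the eight native memory channels Intel described for the chip; the function name and constants are illustrative, and real-world sustained bandwidth will be lower than these figures.

```python
# Back-of-the-envelope peak DDR5 bandwidth per socket.
# Assumption (not from Intel's announcement): 8 bytes moved per transfer per channel.
BYTES_PER_TRANSFER = 8
# Eight natively attached memory channels, as described for Emerald Rapids.
NATIVE_CHANNELS = 8


def peak_bandwidth_gbs(mega_transfers_per_sec: int, channels: int = NATIVE_CHANNELS) -> float:
    """Return peak theoretical bandwidth in GB/s (1 GB = 10^9 bytes)."""
    return mega_transfers_per_sec * BYTES_PER_TRANSFER * channels / 1000


emerald = peak_bandwidth_gbs(5600)   # Emerald Rapids, DDR5-5600
sapphire = peak_bandwidth_gbs(4800)  # Sapphire Rapids, DDR5-4800

print(f"Emerald Rapids:  {emerald:.1f} GB/s")
print(f"Sapphire Rapids: {sapphire:.1f} GB/s")
print(f"Uplift:          {emerald / sapphire:.3f}x")
```

Under those assumptions, the move from 4800MT/s to 5600MT/s works out to roughly a 17% increase in peak memory bandwidth per socket.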

For the CXL implementation, the chip supports eight channels of natively attached memory, and customers can define four additional ranges that can be populated behind CXL. Software manages and supports the memory channels.

“So you’re going to get 12 channels… of memory, and it is just exposed as a single range,” said Sailesh Kottapalli, a senior fellow at Intel.

The bandwidth expansions support exposure to more memory channels around the CXL implementation, which ensures “we don’t have a lot of data left behind,” Kottapalli said.

The new chip is broken into two logical clusters, an improvement over the four logical clusters in Sapphire Rapids. With Emerald Rapids, the two clusters are split into two-way 32-core blocks so that VMs can be scheduled for multitenant usage with more efficient utilization and better power efficiency, Kottapalli said.

The performance benefits of Emerald Rapids are not much to write home about. On average, the chip is 1.21 times faster on application performance than Sapphire Rapids but 1.84 times faster than 3rd Gen Xeon chips, a more relevant metric for customers looking to upgrade servers.
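Those two averages can be combined to estimate how much of the gain over 3rd Gen Xeon was already available with Sapphire Rapids. The sketch below is only illustrative: it assumes both averages were measured on comparable workload sets, which Intel's figures do not confirm, and the function name is hypothetical.

```python
def implied_speedup(new_vs_oldest: float, new_vs_previous: float) -> float:
    """Estimate previous-vs-oldest speedup from two ratios sharing the same numerator.

    Assumes both ratios come from comparable workloads (an assumption, not a stated fact).
    """
    return new_vs_oldest / new_vs_previous


# Emerald Rapids vs. 3rd Gen Xeon: 1.84x; Emerald Rapids vs. Sapphire Rapids: 1.21x.
sapphire_vs_3rd_gen = implied_speedup(1.84, 1.21)
print(f"Implied Sapphire Rapids vs. 3rd Gen Xeon: {sapphire_vs_3rd_gen:.2f}x")
```

On that rough basis, Sapphire Rapids would already have been about 1.5 times faster than 3rd Gen Xeon, with Emerald Rapids adding the remaining increment.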

AI inferencing on Emerald Rapids was between 1.1 and 1.44 times faster than on Sapphire Rapids for the BFloat16 data type. The new chip has many of the same AI features, including the AMX instruction set introduced in Sapphire Rapids.

Intel was able to improve the frequency for heavy AVX usages by about 10% and up to 10% for AMX usages, depending on the different profiles.

Intel also did a lot of work to deliver power efficiency improvements when servers are idle and cores are not operating. Intel introduced an optimized power mode with Sapphire Rapids, and architectural changes have led to power efficiency improvements with Emerald Rapids.

Kottapalli claimed 100 watts of saving per socket when in idle mode.

“It is quite common that systems generally run at 25 to 50% of the utilization. They don’t necessarily run that 100%. None of the infrastructure providers really want to provision for 100%, 75% is usually about the peak,” Kottapalli said.

Emerald Rapids also has security features such as TDX (Trust Domain Extensions), which creates a secure enclave to run applications. TDX prevents theft of data as it moves inside chips. Security typically slows down the performance of chips, but Singhal said the performance penalty is in the single digits.

The chip also supports on-demand capabilities, in which cloud providers can theoretically shut off features and charge an extra fee to switch them on.

Intel also talked extensively about its Gaudi chips, which are taking on a more important role as it tries to catch up with Nvidia in AI training and inferencing. The Gaudi2 chip is now shipping, with Gaudi 3 coming next year.